Deploy Hermes Agent | OpenClaw Alternative on Railway [May'26]

[May'26] Hermes AI agent – faster & smarter than OpenClaw & Claude agents.


Deploy and Host Hermes AI Agent on Railway

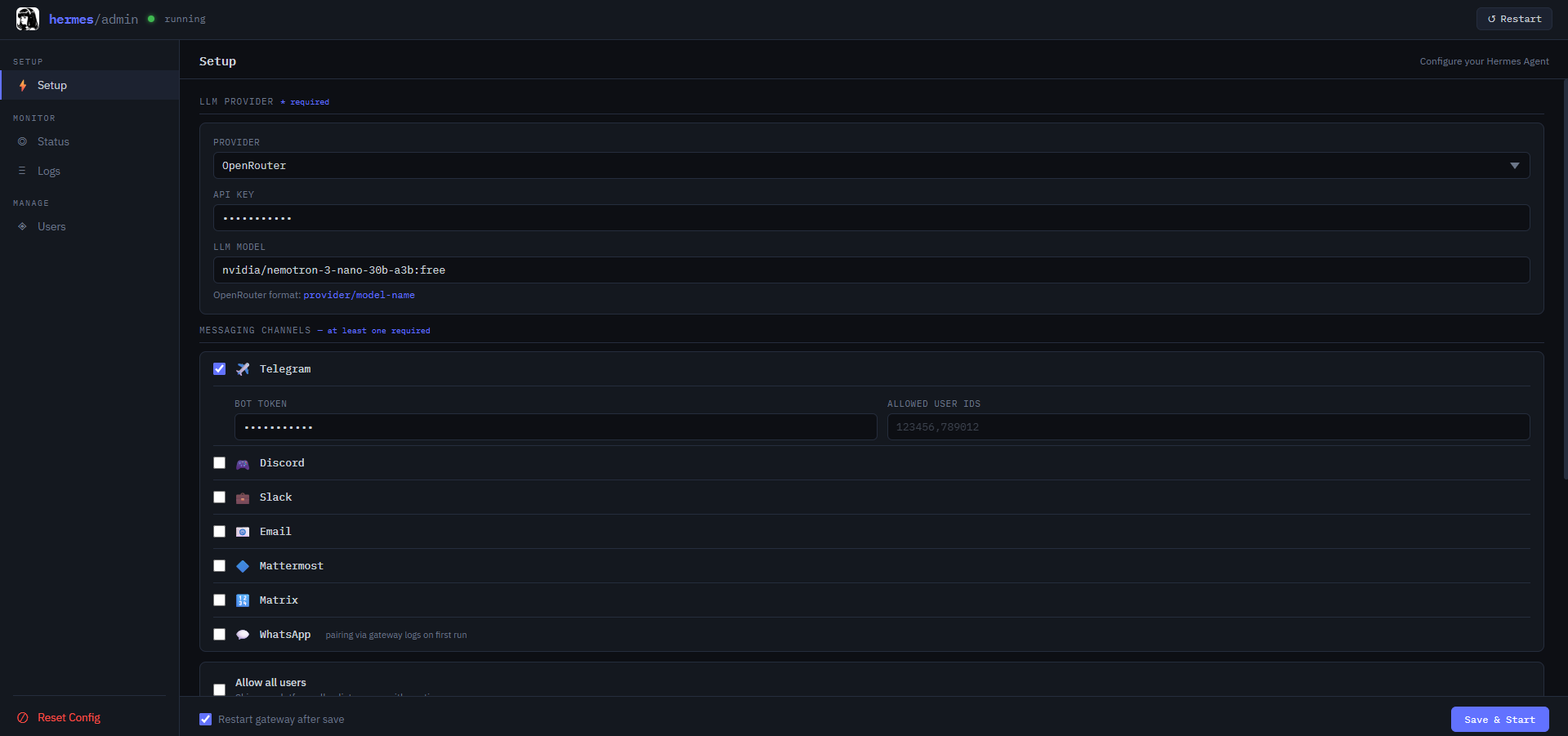

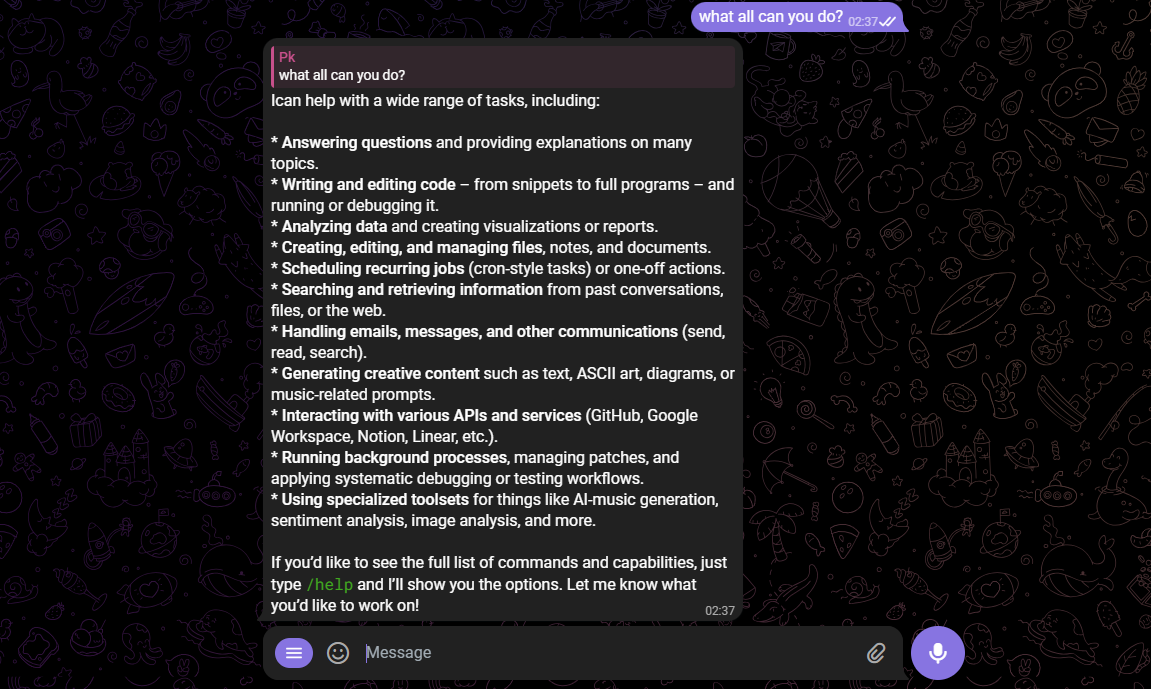

Hermes is an open-source AI agent framework built for production — fast inference, multi-step tool use, persistent memory, and LLM-agnostic design. Connect OpenAI, Anthropic, Groq, Ollama, or any OpenAI-compatible endpoint and deploy a fully functional AI agent in minutes with one-click Railway deployment. No DevOps expertise required.

What This Template Deploys

| Service | Purpose |
|---|---|
| Hermes Agent | The core AI agent runtime: handles LLM routing, tool execution, session management, and API serving on port 3000 |
| PostgreSQL | Persistent storage for agent memory, session state, and conversation history across restarts |
| Redis | Cache layer for session management and high-throughput request handling |

All services are pre-wired via Railway's private network. Credentials are injected automatically — no manual connection string configuration required.
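
To illustrate what an injected credential looks like from the application's side, here is a minimal Python sketch that splits a connection URL into the parts a client library needs. The example URLs, the `*.railway.internal` hostnames shown in them, and the `describe_service_url` helper are illustrative assumptions, not values taken from this template. Railway supplies the real strings at deploy time.

```python
from urllib.parse import urlsplit


def describe_service_url(url: str) -> dict:
    """Split a connection URL into the parts a client library needs."""
    parts = urlsplit(url)
    return {
        "scheme": parts.scheme,
        "host": parts.hostname,
        "port": parts.port,
        # An empty path means no database name was given in the URL.
        "database": parts.path.lstrip("/") or None,
        "has_password": parts.password is not None,
    }


# Hypothetical values for illustration only; Railway injects the real ones.
EXAMPLE_DATABASE_URL = "postgresql://hermes:secret@postgres.railway.internal:5432/railway"
EXAMPLE_REDIS_URL = "redis://default:secret@redis.railway.internal:6379"

if __name__ == "__main__":
    for name, url in [("DATABASE_URL", EXAMPLE_DATABASE_URL),
                      ("REDIS_URL", EXAMPLE_REDIS_URL)]:
        print(name, describe_service_url(url))
```

A host on the private network is reachable only from services in the same Railway project, which is why the database and cache need no public port.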

About Hosting Hermes

Running a production AI agent requires a persistent runtime, database-backed memory, secure credential management, and a public HTTPS endpoint for integrations. Without a managed host, you're configuring Docker, inter-service networking, SSL, and environment variable handling manually.

Railway provisions all of it automatically. Hermes runs as an always-on agent service with vertical and horizontal scaling available via a single click. Persistent memory, secret management, and HTTPS are handled out of the box.

Typical cost: ~$5–10/month on Railway's Hobby plan for the full three-service stack. LLM API costs are separate and depend on your provider and usage volume.
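
As a rough worked example of how the two cost components add up, the sketch below sums the platform cost and an LLM usage cost. The token volume and the per-million-token price are made-up inputs for illustration; substitute your provider's actual pricing.

```python
def estimate_monthly_cost(platform_usd: float,
                          tokens_per_month: int,
                          usd_per_million_tokens: float) -> float:
    """Return platform cost plus LLM usage cost, in US dollars."""
    llm_usd = tokens_per_month / 1_000_000 * usd_per_million_tokens
    return round(platform_usd + llm_usd, 2)


if __name__ == "__main__":
    # Hypothetical inputs: $5 platform cost, 2M tokens at $1.50 per million.
    print(estimate_monthly_cost(5.0, 2_000_000, 1.5))
```

With a self-hosted model the token price is effectively zero, so the total reduces to the platform cost alone.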

Deploy in Under 5 Minutes

- Click Deploy on Railway and wait for the build to complete (~3–5 minutes)

- Set your LLM_API_KEY and preferred LLM_PROVIDER in the Variables tab

- Railway injects DATABASE_URL and REDIS_URL; Hermes detects them and initializes persistent memory on first boot

- Open your Railway-assigned URL; Hermes is live and ready to handle requests

No SSH. No Docker configuration. No reverse proxy setup.

Common Use Cases

- AI customer support agent — deploy Hermes as a 24/7 support bot that resolves tickets, answers FAQs, and escalates complex issues with persistent session memory across conversations

- Autonomous research assistant — connect Hermes to web browsing and document tools to summarize sources and synthesize multi-step research without manual prompt chaining

- Code review and dev automation — integrate Hermes into your GitHub workflow to review pull requests, generate boilerplate, and write tests with full codebase context

- Self-hosted alternative to cloud AI agents: run your own LLM-powered agent on Railway infrastructure; conversations and tool outputs are stored in your own environment, and prompts go only to the LLM provider you configure

- Multi-agent orchestration — use Hermes as a coordinator in a multi-agent pipeline, delegating subtasks to specialized sub-agents while maintaining shared state via PostgreSQL

- LLM-agnostic automation — swap between OpenAI, Anthropic, Groq, or local Ollama models without changing agent logic; optimize for cost or performance per use case

Configuration

| Variable | Required | Description |
|---|---|---|
| LLM_API_KEY | ✅ Required | API key for your chosen LLM provider |
| LLM_PROVIDER | ✅ Required | Provider identifier, e.g. openai, anthropic, groq |
| AGENT_NAME | Optional | Customize your agent's identity across sessions; defaults to Hermes |
| DATABASE_URL | ✅ Auto-injected | PostgreSQL connection string, injected via Railway reference variable |
| REDIS_URL | ✅ Auto-injected | Redis connection URI, injected via Railway reference variable |
| PORT | Pre-set | Set to 3000; auto-configured by Railway |
| NODE_ENV | Pre-set | Set to production |
| LLM_BASE_URL | Optional | Override for OpenAI-compatible endpoints: Ollama, vLLM, OpenRouter |
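
The variables above can be checked at startup with a small loader. The following is a minimal Python sketch, not Hermes's actual configuration code: the `HermesConfig` class and `load_config` function are hypothetical names, and the defaults simply mirror the table (AGENT_NAME defaults to Hermes, PORT to 3000).

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class HermesConfig:
    """Hypothetical container for the variables listed in the table."""
    llm_api_key: str
    llm_provider: str
    database_url: str
    redis_url: str
    agent_name: str = "Hermes"
    port: int = 3000
    llm_base_url: Optional[str] = None


REQUIRED = ("LLM_API_KEY", "LLM_PROVIDER", "DATABASE_URL", "REDIS_URL")


def load_config(env: Mapping[str, str] = os.environ) -> HermesConfig:
    """Fail fast if a required variable is missing or empty."""
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        raise RuntimeError("Missing required variables: " + ", ".join(missing))
    return HermesConfig(
        llm_api_key=env["LLM_API_KEY"],
        llm_provider=env["LLM_PROVIDER"].lower(),
        database_url=env["DATABASE_URL"],
        redis_url=env["REDIS_URL"],
        agent_name=env.get("AGENT_NAME") or "Hermes",
        port=int(env.get("PORT") or 3000),
        llm_base_url=env.get("LLM_BASE_URL") or None,
    )
```

Passing the environment in as a mapping keeps the loader easy to test with a plain dictionary instead of real environment variables.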

Hermes vs. Alternatives

| Feature | Hermes | OpenClaw | Standalone Claude Agent |
|---|---|---|---|
| Persistent memory | ✅ PostgreSQL / Redis | Limited | ❌ Session only |
| LLM provider flexibility | ✅ Any provider | Limited | ❌ Anthropic only |
| Self-hosted and private | ✅ Yes | Partial | ❌ No |
| Multi-step tool chaining | ✅ Yes | Basic | ⚠️ Manual setup |
| One-click Railway deploy | ✅ Yes | ❌ No | ❌ No |
| Cost control | ✅ Full: swap providers freely | Limited | Limited |

Dependencies for Hermes Hosting

- API key from at least one LLM provider (OpenAI, Anthropic, Groq, or OpenRouter)

- Railway account — Hobby plan (~$5–10/month) covers all three services

- Optional: OpenAI-compatible local model endpoint (Ollama, vLLM) for zero API cost inference

Deployment Dependencies

- Hermes GitHub Repository — source code and configuration reference

- Railway Documentation — platform guides for scaling and secrets

- OpenAI API Documentation — LLM backend integration

- Railway Volumes Documentation — optional persistent storage

Implementation Details

This template deploys the Hermes agent runtime alongside Railway-managed PostgreSQL and Redis, connected over private internal networking. Database and cache ports are never exposed publicly.

On first boot, Hermes auto-detects DATABASE_URL and REDIS_URL and initializes its memory schema. Subsequent restarts preserve all session state and conversation history without re-initialization. The agent API is served at your Railway public HTTPS domain on port 3000.
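
The first-boot behavior described above relies on schema creation being idempotent. Here is a minimal Python sketch of that pattern. It uses the standard-library `sqlite3` module as a stand-in for PostgreSQL so it runs anywhere, and the `messages` table and the helper names are hypothetical, not Hermes's real schema.

```python
import sqlite3

# Hypothetical schema; IF NOT EXISTS makes the statement safe to repeat.
SCHEMA = """
CREATE TABLE IF NOT EXISTS messages (
    id INTEGER PRIMARY KEY,
    session_id TEXT NOT NULL,
    role TEXT NOT NULL,
    content TEXT NOT NULL
);
"""


def init_memory(conn: sqlite3.Connection) -> None:
    """Create the memory table if it is missing; safe to call on every boot."""
    conn.executescript(SCHEMA)
    conn.commit()


def remember(conn: sqlite3.Connection, session_id: str, role: str, content: str) -> None:
    """Append one message to a session's history."""
    conn.execute(
        "INSERT INTO messages (session_id, role, content) VALUES (?, ?, ?)",
        (session_id, role, content),
    )
    conn.commit()


def history(conn: sqlite3.Connection, session_id: str) -> list:
    """Return (role, content) pairs for a session in insertion order."""
    rows = conn.execute(
        "SELECT role, content FROM messages WHERE session_id = ? ORDER BY id",
        (session_id,),
    )
    return rows.fetchall()
```

Calling `init_memory` again after data has been written leaves existing rows untouched, which is the property that lets restarts skip re-initialization.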

Frequently Asked Questions

How much does it cost to run Hermes on Railway? Approximately $5–10/month on Railway's Hobby plan for the three-service stack. LLM API costs are separate — using Groq or a local Ollama model can bring inference costs to near zero. There are no per-request fees from Railway itself.

Does Hermes retain memory between conversations? Yes. Session state and conversation history are stored in PostgreSQL. Hermes picks up exactly where it left off after restarts, redeploys, or version updates — without re-initialization.

Can I switch LLM providers without rewriting agent logic? Yes. Set LLM_PROVIDER and LLM_API_KEY to your new provider and redeploy. Hermes is LLM-agnostic: agent logic, tool definitions, and memory are decoupled from the provider layer.
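
One way to picture the decoupling described in this answer is a provider registry keyed by the LLM_PROVIDER value. The Python sketch below is purely illustrative: the provider functions are fakes that make no network calls, and `PROVIDERS`, `get_provider`, and `run_agent` are hypothetical names rather than Hermes APIs.

```python
from typing import Callable, Dict

# A provider is modelled as a function from prompt text to reply text.
Provider = Callable[[str], str]


def fake_openai(prompt: str) -> str:
    """Stand-in for a real OpenAI client call."""
    return "[openai] " + prompt


def fake_anthropic(prompt: str) -> str:
    """Stand-in for a real Anthropic client call."""
    return "[anthropic] " + prompt


PROVIDERS: Dict[str, Provider] = {
    "openai": fake_openai,
    "anthropic": fake_anthropic,
}


def get_provider(name: str) -> Provider:
    """Look up a provider by its LLM_PROVIDER identifier."""
    try:
        return PROVIDERS[name.lower()]
    except KeyError:
        raise ValueError(f"Unknown LLM_PROVIDER: {name!r}") from None


def run_agent(task: str, provider: Provider) -> str:
    """Agent logic that never mentions a specific provider."""
    return provider("Summarize: " + task)
```

Because `run_agent` only sees the `Provider` callable, changing the identifier passed to `get_provider` swaps the backend without touching the agent code.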

Can I use a local model instead of a cloud API? Yes. Set LLM_BASE_URL to any OpenAI-compatible endpoint, such as Ollama, vLLM, or LM Studio. If that endpoint runs inside your Railway project, prompts and responses never leave your Railway environment.
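
For reference, an OpenAI-compatible endpoint accepts a JSON POST to a `chat/completions` path under its base URL. The Python sketch below only builds such a request and does not send it. It assumes the base URL already includes the `/v1` prefix, as Ollama's OpenAI-compatible API does. Whether Hermes expects LLM_BASE_URL in exactly that form is an assumption, and the hostname and model name in the usage lines are hypothetical.

```python
import json
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       api_key: str = "") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-compatible chat completion request."""
    url = base_url.rstrip("/") + "/chat/completions"
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Local servers often ignore the key; hosted gateways require it.
        headers["Authorization"] = "Bearer " + api_key
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


if __name__ == "__main__":
    # Hypothetical private hostname and model name, for illustration only.
    req = build_chat_request("http://ollama.railway.internal:11434/v1", "llama3", "Hello")
    print(req.get_method(), req.full_url)
```

Passing the request to `urllib.request.urlopen` would perform the call; that step is left out so the sketch runs without a live model server.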

Is my data private? Yes. Hermes is fully self-hosted on Railway. Conversations, tool outputs, and agent memory stay in your PostgreSQL instance. No data is sent to third-party analytics services. Prompts are still sent to whichever LLM provider you configure, unless LLM_BASE_URL points at a model you host yourself. API keys are stored as encrypted Railway environment variables.

How do I update Hermes to a newer version? Update the Docker image tag or source reference in your Railway service settings and trigger a redeploy. PostgreSQL and Redis data are unaffected by the update.

Why Deploy Hermes on Railway?

Railway is a single platform for deploying your infrastructure stack. It hosts your infrastructure so you don't have to manage configuration, and it lets you scale vertically and horizontally.

By deploying Hermes on Railway, you get a production-ready AI agent with persistent memory, LLM-provider flexibility, automatic HTTPS, and zero server administration, at the cost of Railway's base compute and your chosen LLM provider's API pricing.

Template Content

hermes-agent

Shinyduo/hermes-agent