Deploy Langfuse | Open Source LLM Observability (Datadog Alternative)

Self-Host Langfuse on Railway: Traces, Evals & Prompt Management

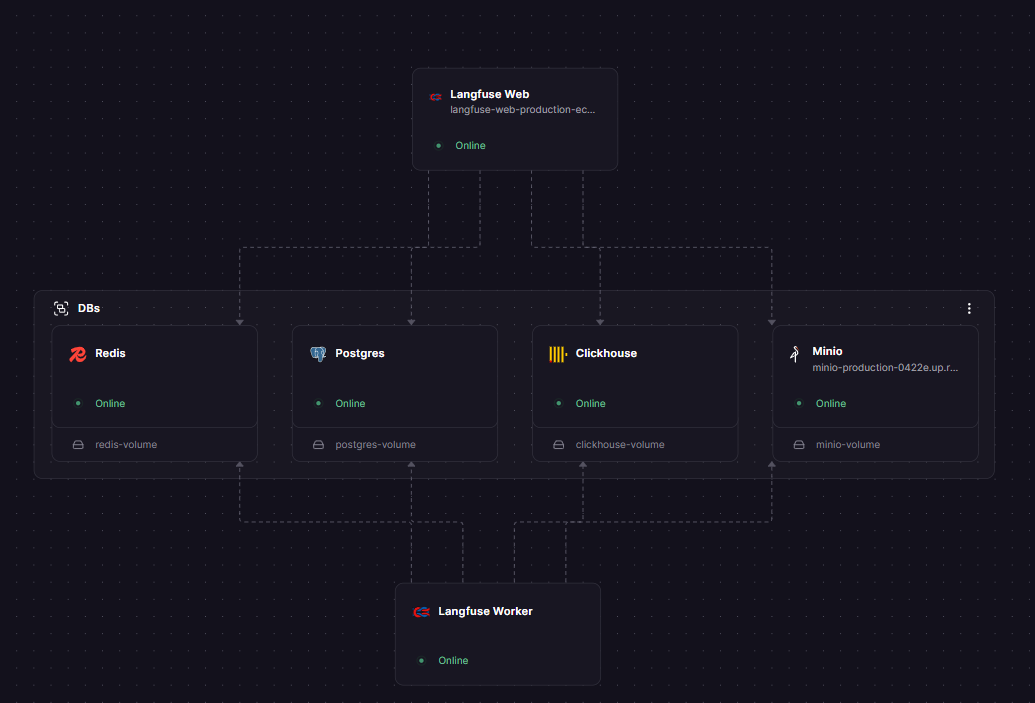

Template services (persistent volume mount paths in parentheses): Postgres (/var/lib/postgresql/data), ClickHouse (/var/lib/clickhouse), Redis (/data), MinIO (/data), Langfuse Worker, Langfuse Web.

Deploy and Host Langfuse on Railway

🪢 Langfuse is an open-source LLM engineering platform that gives teams full visibility into their AI applications — traces, evals, prompt management, cost tracking, and dashboards, all in one place.

Self-host Langfuse on Railway with a one-click deploy and get the exact same platform that powers Langfuse Cloud, running entirely on your own infrastructure.

🚀 Getting Started with Langfuse on Railway

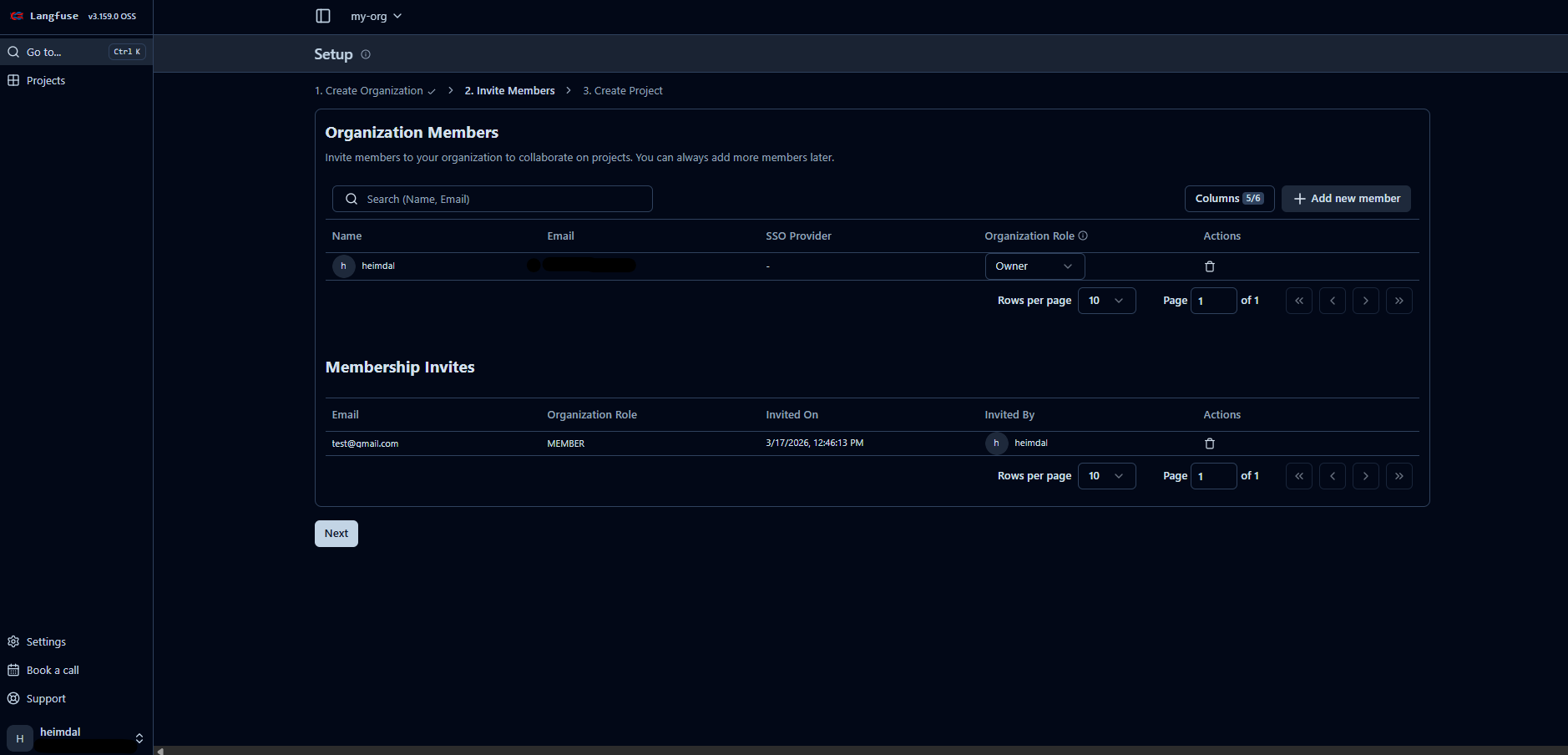

After your Railway deploy is live, there are a few important first-time setup steps to follow in order:

Step 1 — Sign up while registration is open 🔓

Set AUTH_DISABLE_SIGNUP to false so that registration is open. While it is open, anyone with your URL can self-register, so open your langfuse-web Railway domain in a browser and create your admin account right away, before locking it down.

Step 2 — Disable open signup 🔒

Once your account is created, go to your langfuse-web service on Railway, set AUTH_DISABLE_SIGNUP="true", and redeploy. After this, no one can self-register — your instance is private.

Step 3 — Set up SMTP for email invites 📧

To invite teammates, Langfuse needs to send emails. Add these two variables to langfuse-web and langfuse-worker, then redeploy:

```
EMAIL_FROM_ADDRESS="no-reply@yourdomain.com"
SMTP_CONNECTION_URL="smtps://user:password@smtp.yourprovider.com:465"
```

Common providers: SendGrid (smtp.sendgrid.net:465), Resend (smtp.resend.com:465), Postmark (smtp.postmarkapp.com:465).
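If your SMTP password or API key contains characters such as @, : or /, it must be percent-encoded inside SMTP_CONNECTION_URL, otherwise the URL is split in the wrong place. The sketch below builds the URL with Python's standard library. build_smtp_url and credentials_round_trip are hypothetical helpers, the SendGrid-style apikey username and the password are placeholders, and it assumes Langfuse's mail client percent-decodes URL credentials the way standard URL parsers do.

```python
from urllib.parse import quote, unquote, urlsplit


def build_smtp_url(user: str, password: str, host: str, port: int = 465) -> str:
    """Build an smtps:// connection URL with percent-encoded credentials.

    Hypothetical helper: '@', ':' and '/' in a password would otherwise be
    read as URL delimiters.
    """
    # safe="" escapes every reserved character, including '/'
    return f"smtps://{quote(user, safe='')}:{quote(password, safe='')}@{host}:{port}"


def credentials_round_trip(url: str) -> tuple:
    """Decode user and password back out of the URL, as a URL parser would."""
    parts = urlsplit(url)
    return unquote(parts.username or ""), unquote(parts.password or "")


if __name__ == "__main__":
    url = build_smtp_url("apikey", "p@ss:w/rd", "smtp.sendgrid.net")
    print(url)  # smtps://apikey:p%40ss%3Aw%2Frd@smtp.sendgrid.net:465
    print(credentials_round_trip(url))  # ('apikey', 'p@ss:w/rd')
```

Paste the printed value into SMTP_CONNECTION_URL on both langfuse-web and langfuse-worker.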

Step 4 — Invite your team 👥

Go to Settings → Members → Invite User inside your org. Enter a teammate's email; they'll receive a link that creates an account scoped directly into your organization.

After setup, navigate to Settings → API Keys to generate your LANGFUSE_PUBLIC_KEY and LANGFUSE_SECRET_KEY for instrumenting your application.

```bash
# Install the Python SDK and start tracing
pip install langfuse

export LANGFUSE_PUBLIC_KEY="pk-lf-..."
export LANGFUSE_SECRET_KEY="sk-lf-..."
export LANGFUSE_HOST="https://your-langfuse-web.up.railway.app"
```
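Langfuse's public API authenticates with HTTP Basic auth, using the public key as the username and the secret key as the password. A quick way to sanity-check the three variables exported above is to build such a request yourself. This is a minimal sketch: the helper names are hypothetical, and the /api/public/projects path (expected to return the project the key belongs to) is an assumption to confirm against the API reference for your Langfuse version.

```python
import base64
import os
from urllib.request import Request


def langfuse_basic_auth(public_key: str, secret_key: str) -> str:
    """Return an HTTP Basic Authorization header value.

    The public key is the username and the secret key is the password.
    """
    token = base64.b64encode(f"{public_key}:{secret_key}".encode()).decode()
    return f"Basic {token}"


def build_projects_request(host: str, public_key: str, secret_key: str) -> Request:
    """Prepare, but do not send, an authenticated GET against the public API.

    ASSUMPTION: /api/public/projects returns the project tied to the key;
    confirm the path in the API reference for your Langfuse version.
    """
    url = host.rstrip("/") + "/api/public/projects"
    auth = langfuse_basic_auth(public_key, secret_key)
    return Request(url, headers={"Authorization": auth})


if __name__ == "__main__":
    req = build_projects_request(
        os.environ.get("LANGFUSE_HOST", "http://localhost:3000"),
        os.environ.get("LANGFUSE_PUBLIC_KEY", "pk-lf-..."),
        os.environ.get("LANGFUSE_SECRET_KEY", "sk-lf-..."),
    )
    print(req.full_url)
    # To actually call the API: urllib.request.urlopen(req, timeout=10)
```

A 401 response usually means the key pair is wrong or belongs to a different instance than LANGFUSE_HOST points to.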

About Hosting Langfuse 🧠

Langfuse is purpose-built for LLM applications — it understands token usage, model parameters, prompt/completion pairs, multi-turn conversations, and agentic workflows natively. Traditional APM tools tell you if your service is up; Langfuse tells you whether your model is actually performing well.

Key features:

- 🔍 Tracing — nested traces for every LLM call, retrieval step, tool call, and custom logic with full context

- 📝 Prompt Management — version-controlled prompts with server + client-side caching and zero added latency

- 🧪 Evaluations — LLM-as-a-Judge, user feedback collection, manual labeling, and custom eval pipelines

- 📊 Datasets — test sets for pre-deployment benchmarks and continuous regression testing

- 🛝 Playground — jump directly from a bad trace to the prompt editor to iterate and fix

- 🔗 50+ integrations — OpenAI SDK, LangChain, LlamaIndex, LiteLLM, OpenTelemetry, and more

- 🔓 MIT licensed: no usage caps and full data ownership; self-hosted instances report only basic usage statistics by default, and setting TELEMETRY_ENABLED=false turns that off
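The Prompt Management bullet mentions client-side caching. The general pattern is a TTL cache: reads within the TTL are served from memory, so they add no network latency. The sketch below illustrates that pattern only; PromptCache, its fetch callback, and the 60-second TTL are hypothetical and are not the Langfuse SDK's internals. It falls back to the stale copy when a refetch fails. A production client would typically also refresh expired entries in the background so reads never block, which is omitted here.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class _Entry:
    value: str
    fetched_at: float


class PromptCache:
    """Hypothetical client-side TTL cache for prompt templates."""

    def __init__(self, fetch: Callable[[str], str], ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch  # e.g. an HTTP call to the prompt API (assumed)
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so the cache can be tested
        self._entries: Dict[str, _Entry] = {}

    def get(self, name: str) -> str:
        now = self._clock()
        entry: Optional[_Entry] = self._entries.get(name)
        if entry is not None and now - entry.fetched_at < self._ttl:
            return entry.value  # fresh hit: no network round trip
        try:
            value = self._fetch(name)
        except Exception:
            if entry is not None:
                return entry.value  # refetch failed: serve the stale copy
            raise  # nothing cached yet, so surface the error
        self._entries[name] = _Entry(value, now)
        return value
```

Serving the stale copy on failure means a brief outage of the prompt API degrades to slightly old prompts instead of failing requests.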

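The v3 architecture writes each incoming batch of trace events to S3-compatible storage (MinIO) as soon as it arrives and enqueues an ingestion job in Redis; the worker then loads the events and writes them into ClickHouse asynchronously. Because the web service only has to persist and enqueue, traffic spikes build up in the queue instead of causing trace loss or slowing down the UI.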
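The buffer-then-ingest flow can be modelled in a few lines. In this sketch a dict stands in for the MinIO bucket, a queue.Queue for the Redis-backed job queue, and a list for the ClickHouse table; all three, along with ingest and worker, are hypothetical in-memory stand-ins, not Langfuse's code. The point is the ordering: the raw event is persisted first, and only its key travels through the queue.

```python
import json
import queue
import threading
import uuid
from typing import Dict, List

# Hypothetical in-memory stand-ins: a dict for the MinIO bucket, a Queue for
# the Redis-backed job queue, and a list for the ClickHouse events table.
blob_store: Dict[str, bytes] = {}
jobs: "queue.Queue[str]" = queue.Queue()
analytics_rows: List[dict] = []


def ingest(event: dict) -> str:
    """Web side: persist the raw event first, then enqueue only its key.

    The HTTP request could be acknowledged as soon as this returns, so a
    slow analytics store never delays the client.
    """
    key = f"events/{uuid.uuid4()}.json"
    blob_store[key] = json.dumps(event).encode()
    jobs.put(key)
    return key


def worker() -> None:
    """Worker side: take keys off the queue, load each event, store the row."""
    while True:
        key = jobs.get()
        try:
            analytics_rows.append(json.loads(blob_store[key]))
        finally:
            jobs.task_done()  # always mark the job as handled


if __name__ == "__main__":
    threading.Thread(target=worker, daemon=True).start()
    for i in range(5):
        ingest({"trace_id": f"t{i}", "name": "llm-call"})
    jobs.join()  # block until the worker has drained the queue
    print(len(blob_store), len(analytics_rows))  # 5 5
```

A burst of requests lengthens the queue rather than the request latency, and the worker catches up at its own pace.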

Why Deploy Langfuse on Railway ✅

Railway handles the infrastructure so you can focus on your AI app:

- 🚂 One-click deploy — all 6 services pre-configured and private-networked

- 🔐 Private networking between services — ClickHouse, Redis, and MinIO never exposed publicly by default

- 📦 Persistent volumes — Postgres, ClickHouse, and MinIO data survives redeploys

- 💰 No per-event cloud fees — pay Railway infra costs only; no Langfuse usage billing on self-hosted

- 🔄 Simple upgrades: update the Docker image tag on web and worker and redeploy; migrations run automatically

Common Use Cases 🛠️

- Debugging RAG pipelines — trace every retrieval step alongside the LLM call to pinpoint where answers go wrong

- Prompt version rollouts — A/B test prompt variants in production and score outputs before committing a new version

- Agent observability — visualize multi-step agentic workflows as graphs; see exactly where decisions were made and why

- Cost monitoring — track token usage and cost per user, project, or model across your entire team's production traffic
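Langfuse derives cost from ingested token usage and its model price definitions, so you normally don't compute this yourself. The sketch below only shows the underlying arithmetic for per-user cost. The model names, per-million-token prices, and record fields are hypothetical. Decimal is used so that many small per-call amounts sum without floating-point drift.

```python
from collections import defaultdict
from decimal import Decimal
from typing import Dict, Iterable, Tuple

# Hypothetical USD prices per 1M tokens as (input, output). Real prices vary
# by model and change over time; Langfuse keeps its own price definitions.
PRICES: Dict[str, Tuple[Decimal, Decimal]] = {
    "model-a": (Decimal("0.50"), Decimal("1.50")),
    "model-b": (Decimal("3.00"), Decimal("15.00")),
}
MILLION = Decimal(1_000_000)


def cost_per_user(generations: Iterable[dict]) -> Dict[str, Decimal]:
    """Sum USD cost per user from generation records with token counts."""
    totals: Dict[str, Decimal] = defaultdict(Decimal)
    for g in generations:
        in_price, out_price = PRICES[g["model"]]
        cost = (g["input_tokens"] * in_price + g["output_tokens"] * out_price) / MILLION
        totals[g["user_id"]] += cost
    return dict(totals)


if __name__ == "__main__":
    sample = [
        {"user_id": "alice", "model": "model-a", "input_tokens": 1000, "output_tokens": 500},
        {"user_id": "bob", "model": "model-b", "input_tokens": 2000, "output_tokens": 1000},
    ]
    for user, cost in sorted(cost_per_user(sample).items()):
        print(user, cost)
```

Grouping by model or project instead of user only changes the dictionary key.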

Dependencies for Langfuse 📦

- langfuse-web: docker.io/langfuse/langfuse:3 (github.com/langfuse/langfuse)

- langfuse-worker: langfuse/langfuse-worker:3

- Postgres: Railway managed Postgres (v17)

- ClickHouse: clickhouse/clickhouse-server:24 (OLAP analytics store)

- Redis: redis:8.2.1

- MinIO: minio/minio (S3-compatible blob storage)

⚙️ Environment Variables Reference

| Variable | Description | Required |
|---|---|---|
| AUTH_DISABLE_SIGNUP | Set to true to block open self-registration | Recommended |
| CLICKHOUSE_CLUSTER_ENABLED | Must be false for single-node Railway deploys | ✅ |
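Before redeploying, it can help to check the variables from this guide in one place. check_langfuse_env is a hypothetical helper that encodes only the rules stated on this page: CLICKHOUSE_CLUSTER_ENABLED must be false, AUTH_DISABLE_SIGNUP should be true once your admin account exists, and the two SMTP variables from Step 3 belong together. It is a sketch, not a full validation of Langfuse's configuration.

```python
from typing import Dict, List


def check_langfuse_env(env: Dict[str, str]) -> List[str]:
    """Return problems with a single-node Railway config (hypothetical helper).

    Only covers the variables discussed in this guide.
    """
    problems: List[str] = []
    if env.get("CLICKHOUSE_CLUSTER_ENABLED", "").lower() != "false":
        problems.append("CLICKHOUSE_CLUSTER_ENABLED must be 'false' on a single ClickHouse node")
    if env.get("AUTH_DISABLE_SIGNUP", "").lower() != "true":
        problems.append("AUTH_DISABLE_SIGNUP is not 'true': anyone with the URL can sign up")
    # Invites need both SMTP values; one without the other cannot send email
    has_from = bool(env.get("EMAIL_FROM_ADDRESS"))
    has_smtp = bool(env.get("SMTP_CONNECTION_URL"))
    if has_from != has_smtp:
        problems.append("set EMAIL_FROM_ADDRESS and SMTP_CONNECTION_URL together")
    return problems
```

Inside the container, check_langfuse_env(dict(os.environ)) returns an empty list when none of these rules is violated.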

Deployment Dependencies 🔗

- Docker Hub: hub.docker.com/r/langfuse/langfuse

- Official docs: langfuse.com/self-hosting

- Config reference: langfuse.com/self-hosting/configuration

💵 Is Langfuse Free to Self-Host?

Yes. Langfuse's core is open source under the MIT license and free to self-host with no usage limits (a few Enterprise Edition features, such as SSO enforcement, require a license key). There are no caps on traces, events, users, or data retention when running your own instance. You pay only for Railway infrastructure (compute + storage).

Langfuse Cloud (the managed version) has a free Hobby tier (50k events/month, 2 users, 30-day retention), a Core plan at $29/month, and a Pro plan at $199/month.

🖥️ Minimum Hardware Requirements for Langfuse

| Component | Minimum | Recommended |
|---|---|---|
| CPU | 2 vCPU | 4+ vCPU |
| RAM | 4 GB | 8–16 GB |
| Storage (Postgres) | 10 GB | 20 GB+ |
| Storage (ClickHouse) | 20 GB | 50 GB+ (grows with trace volume) |
| Storage (MinIO) | 10 GB | 50 GB+ |
| Node.js (containers) | Bundled in image | Bundled in image |

ClickHouse is the most resource-intensive component — it needs adequate RAM for analytics queries. For low-traffic instances (< 10k traces/day), 4 GB total RAM across the Railway project is workable. High-traffic production setups should scale ClickHouse to at least 4 GB RAM of its own.
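To size the ClickHouse volume, a back-of-the-envelope estimate is traces per day × average stored size per trace × retention days. The sketch below assumes you measure the average size from your own data; the 2,000-byte figure in the example is a placeholder, not a Langfuse number. ClickHouse compresses columns on disk, so treat the result as a rough raw-data figure; actual disk usage is usually lower.

```python
def estimate_raw_bytes(traces_per_day: int, avg_trace_bytes: int, retention_days: int) -> int:
    """Rough uncompressed size of retained trace data (hypothetical helper).

    avg_trace_bytes should cover a trace and all its observations and must
    come from your own measurements.
    """
    if min(traces_per_day, avg_trace_bytes, retention_days) < 0:
        raise ValueError("inputs must be non-negative")
    return traces_per_day * avg_trace_bytes * retention_days


def to_gb(n_bytes: int) -> float:
    """Convert bytes to decimal gigabytes (1 GB = 10**9 bytes)."""
    return n_bytes / 1_000_000_000


if __name__ == "__main__":
    # 10k traces/day, placeholder 2,000 bytes per trace, 30 days retained
    print(to_gb(estimate_raw_bytes(10_000, 2_000, 30)))  # 0.6
```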

🏠 Self-Hosting Langfuse Outside Railway

Docker Compose (quickest local setup):

```bash
git clone https://github.com/langfuse/langfuse.git
cd langfuse
docker compose up
# UI available at http://localhost:3000
```

Kubernetes (recommended for production):

```bash
helm repo add langfuse https://langfuse.github.io/langfuse-k8s
helm repo update
helm install langfuse langfuse/langfuse \
  --set langfuse.nextauth.secret="your-secret" \
  --set langfuse.salt="your-salt" \
  --set langfuse.encryptionKey="your-64-hex-key" \
  --set postgresql.auth.password="your-pg-password"
```
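The placeholder secrets in the Helm command need real random values. Langfuse's configuration docs suggest openssl rand -hex 32 for the encryption key (64 hex characters, 256 bits) and openssl rand -base64 32 for the salt and NextAuth secret; the sketch below produces equivalent values with Python's secrets module. generate_langfuse_secrets is a hypothetical helper, and its dictionary keys simply mirror the Helm flags above.

```python
import base64
import secrets


def generate_langfuse_secrets() -> dict:
    """Random values for the Helm flags (hypothetical helper).

    - encryptionKey: 32 random bytes as 64 hex characters (256 bits),
      equivalent to `openssl rand -hex 32`.
    - salt and nextauth secret: 32 random bytes, base64-encoded,
      equivalent to `openssl rand -base64 32`.
    """
    return {
        "langfuse.encryptionKey": secrets.token_hex(32),
        "langfuse.salt": base64.b64encode(secrets.token_bytes(32)).decode(),
        "langfuse.nextauth.secret": base64.b64encode(secrets.token_bytes(32)).decode(),
    }


if __name__ == "__main__":
    for name, value in generate_langfuse_secrets().items():
        print(f"{name}={value}")
```

Generate these once and keep them stable across upgrades, since data encrypted or hashed with the old values depends on them.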

📊 Langfuse vs LangSmith vs Helicone

| Feature | Langfuse | LangSmith | Helicone |
|---|---|---|---|
| Open source | ✅ MIT | ❌ Closed source | ✅ Open source |
| Self-hostable | ✅ Free | ❌ Enterprise only | ✅ Free |
| Framework agnostic | ✅ | ⚠️ LangChain-first | ✅ |
| Prompt management | ✅ Full | ✅ Full | ⚠️ Basic |
| LLM-as-a-Judge evals | ✅ | ✅ | ❌ |
| Datasets & experiments | ✅ | ✅ | ❌ |
| Proxy-based integration | ❌ SDK-based | ❌ SDK-based | ✅ URL-swap |
| Built-in caching | ❌ | ❌ | ✅ |
| Cloud free tier | 50k events/mo | 5k traces/mo | 100k req/mo |

Langfuse is the strongest choice when you need full data ownership, framework flexibility, and a complete prompt-to-eval workflow. LangSmith wins for pure LangChain stacks. Helicone is faster to set up if you only need cost tracking and a proxy layer.

❓ FAQ

What is Langfuse? Langfuse is an open-source LLM engineering platform for tracing, prompt management, evaluations, and analytics. It helps teams understand what's happening inside their AI applications — beyond just "the API returned 200" — by capturing every prompt, completion, tool call, and retrieval step with full context.

Why does Langfuse need ClickHouse? ClickHouse is a columnar OLAP database designed for fast analytical queries on high-volume append-only data — exactly what trace analytics requires. Langfuse v3 migrated from Postgres-only to a Postgres + ClickHouse architecture to support sub-second dashboard queries even with millions of traces. You can't remove it from the stack.

Why is MinIO included? Langfuse buffers all incoming trace events to object storage (MinIO) before the worker ingests them into ClickHouse. This decoupling lets traffic spikes queue up instead of causing trace loss or timeouts. MinIO also stores media attachments (images, audio) that SDKs upload directly, which is why it needs a public URL if you trace multimodal inputs.

Does Langfuse support SSO? Yes — Google, GitHub, Azure AD, Okta, Auth0, AWS Cognito, Keycloak, and JumpCloud are supported via environment variables. SSO enforcement (blocking username/password login) is an Enterprise Edition feature requiring a license key.

Can I migrate from LangSmith or Helicone to Langfuse? Langfuse provides Python scripts for migrating traces, prompts, and datasets between instances (including from Langfuse Cloud to self-hosted). Direct migration tooling from LangSmith or Helicone doesn't exist officially, but both platforms export data in formats that Langfuse's API can ingest with custom scripts.

Template Content

- Langfuse Worker: langfuse/langfuse-worker:3

- Langfuse Web: langfuse/langfuse:3

  - AUTH_DISABLE_SIGNUP: Left empty intentionally. Set it to 'false' for the first signup, which becomes the admin account, then set it to 'true' to disable further registrations (see setup guide: https://railway.app/template/your-template)

- Clickhouse: clickhouse/clickhouse-server:24

- Redis: redis:8.2.1

- Minio: minio/minio