Deploy RAGFlow | Open Source RAG Engine

Self-host RAGFlow. Chat with your PDFs, contracts, papers & more

Services in this template and their volume mount paths:

- Redis: volume at /data

- MinIO: volume at /data

- Elasticsearch

- RAGFlow

- MySQL: volume at /var/lib/mysql

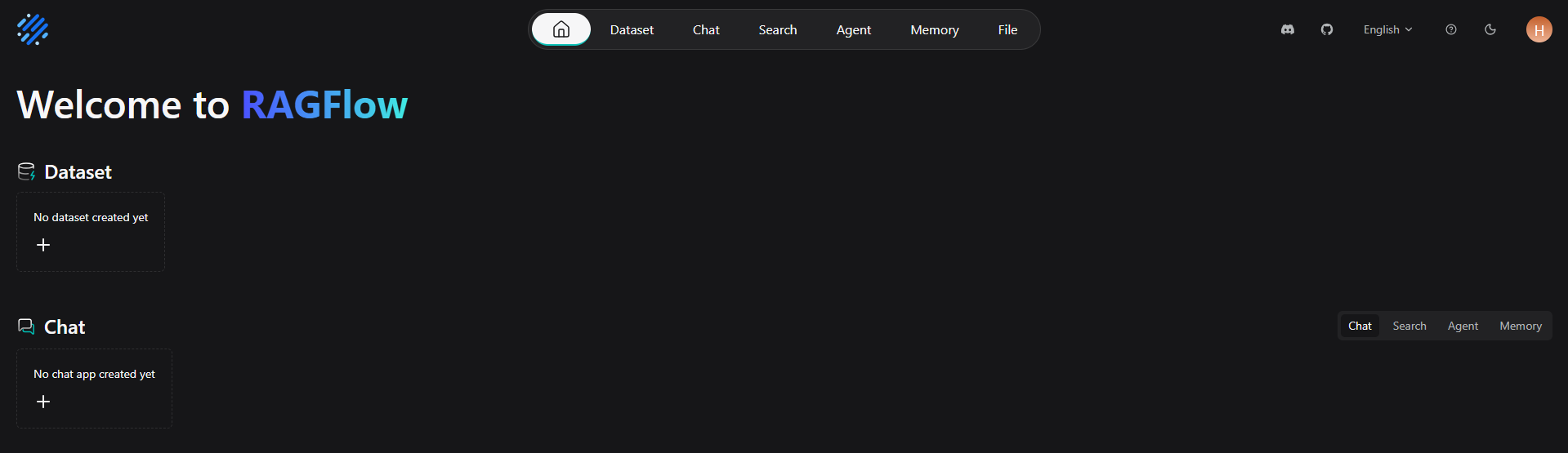

Deploy and Host RAGFlow on Railway

RAGFlow is an open-source retrieval-augmented generation engine built around deep document understanding — it ingests PDFs, Word docs, scanned images, and tables, chunks them with the DeepDoc parser, indexes the embeddings in Elasticsearch, and serves grounded LLM Q&A with traceable citations.

This Railway template self-hosts a complete RAGFlow stack, with MySQL, Redis, MinIO object storage, and an Elasticsearch vector index pre-wired, so you can deploy RAGFlow on Railway in one click and start uploading your own knowledge base immediately.

Getting Started with RAGFlow on Railway

After the template finishes deploying, open the public URL Railway generated for the RAGFlow service. You will land on the RAGFlow login page; click Sign Up and create the first account, which automatically becomes the admin tenant. Once logged in, open Model Providers from the avatar menu and add at least one LLM provider key (OpenAI, Anthropic, DeepSeek, an Ollama endpoint, or any OpenAI-compatible base URL). Then switch to Knowledge Base, click Create knowledge base, upload your first PDF or DOCX, wait for parsing to complete, and start a chat that retrieves grounded answers from your documents. To lock further signups after onboarding your team, set REGISTER_ENABLED=0 on the RAGFlow service and redeploy.

About Hosting RAGFlow

RAGFlow combines a high-quality document parser (DeepDoc) with classical IR scoring and modern dense retrieval, then layers grounded answer generation with explicit citations on top. Unlike chunk-and-pray RAG stacks, RAGFlow traces every answer back to the exact paragraph in the original document, so users can verify it before trusting it.

Key features of self-hosted RAGFlow:

- DeepDoc parser — handles PDFs, DOCX, XLSX, PPTX, scanned images, HTML, Markdown, and tables with layout-aware chunking

- Hybrid retrieval — Elasticsearch BM25 + dense vector search with rerankers

- Grounded citations — every answer references the source chunk

- Multi-tenant — each user/team has isolated knowledge bases

- Agent workflows — visual flow builder for multi-step retrieval pipelines

- OpenAI-compatible API — drop-in replacement for chat completions with RAG
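Hybrid retrieval means each chunk carries two scores: a keyword (BM25) score from the full-text index and a similarity score from its embedding. Raw BM25 scores are unbounded while cosine similarity is not, so the two have to be put on a common scale before they can be combined. The sketch below shows one generic way to do that, a min-max-normalized weighted sum. It assumes that fusion rule purely for illustration and is not RAGFlow's actual scoring code; rerankers are omitted, and the `Chunk` type, `hybrid_rank`, and the 0.5 default `vector_weight` are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Chunk:
    """A retrieved chunk with its keyword score and stored embedding."""
    chunk_id: str
    bm25: float
    embedding: list[float]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def min_max(scores: list[float]) -> list[float]:
    """Rescale scores to [0, 1] so BM25 and cosine values are comparable."""
    lo, hi = min(scores), max(scores)
    if hi == lo:
        return [1.0] * len(scores)
    return [(s - lo) / (hi - lo) for s in scores]


def hybrid_rank(query_vec: list[float], chunks: list[Chunk],
                vector_weight: float = 0.5) -> list[tuple[str, float]]:
    """Fuse normalized keyword and vector scores; return (chunk_id, score), best first."""
    keyword = min_max([c.bm25 for c in chunks])
    vector = min_max([cosine(query_vec, c.embedding) for c in chunks])
    fused = [
        (c.chunk_id, (1.0 - vector_weight) * k + vector_weight * v)
        for c, k, v in zip(chunks, keyword, vector)
    ]
    return sorted(fused, key=lambda pair: pair[1], reverse=True)


chunks = [
    Chunk("a", bm25=12.0, embedding=[1.0, 0.0]),
    Chunk("b", bm25=3.0, embedding=[0.0, 1.0]),
    Chunk("c", bm25=7.5, embedding=[0.7, 0.7]),
]
print(hybrid_rank([1.0, 0.0], chunks))  # "a" ranks first, then "c", then "b"
```

Raising `vector_weight` favors semantic matches; lowering it favors exact keyword hits.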

Why Deploy RAGFlow on Railway

Railway provisions the full multi-service stack (5 services) from a single template:

- One-click deploy of MySQL, Redis, MinIO, Elasticsearch, and RAGFlow

- Private networking between services — no exposed database ports

- Persistent volumes for MySQL, Redis, MinIO, and Elasticsearch data

- Automatic HTTPS on the RAGFlow public domain

- Pay-per-use pricing — scale memory and storage as your knowledge base grows

Common Use Cases

- Engineering knowledge base — index runbooks, design docs, and incident postmortems for instant grounded answers

- Customer support automation — chat over product manuals and FAQs with citations back to source pages

- Legal and compliance research — query contracts, regulations, and policy documents with paragraph-level traceability

- Academic research assistant — chat with research papers, extract methodology, compare findings across PDFs

Dependencies for RAGFlow on Railway

This template provisions five services that work together:

- RAGFlow: infiniflow/ragflow:v0.25.2, the main API and web UI

- MySQL: Railway-managed MySQL; stores users, tenants, and knowledge base metadata

- Redis: Railway-managed Redis; task queue and chat session cache

- MinIO: quay.io/minio/minio:latest, an S3-compatible object store for uploaded documents

- Elasticsearch: elasticsearch:8.11.3, the vector and keyword index for retrieval

Environment Variables Reference for Self-Hosted RAGFlow

| Variable | Purpose |
|---|---|
| DOC_ENGINE | Vector backend: elasticsearch, infinity, or opensearch |
| MINIO_HOST | MinIO hostname without port (RAGFlow appends :9000 itself) |
| ES_HOST / ELASTIC_PASSWORD | Elasticsearch endpoint and basic-auth password |
| MYSQL_DBNAME / MYSQL_PASSWORD | RAGFlow metadata DB |
| REGISTER_ENABLED | 1 allows new signups; set to 0 after onboarding |
| DEVICE | cpu on Railway (no GPUs); use external embedding APIs for production |
| MEM_LIMIT | Soft cap for parser workers, in bytes (default 8073741824, about 8.07 GB or 7.5 GiB) |
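The constraints in this table are easy to get wrong when editing variables by hand in the dashboard. Below is a minimal sketch of a pre-flight check. `check_ragflow_env` and `describe_bytes` are hypothetical helpers (RAGFlow does not ship them), the rules come only from the table above, and unset variables are assumed to take the defaults written into the code. The byte helper also shows why the MEM_LIMIT default is only roughly 8 GB.

```python
ALLOWED_DOC_ENGINES = {"elasticsearch", "infinity", "opensearch"}


def check_ragflow_env(env: dict[str, str]) -> list[str]:
    """Return human-readable problems in a RAGFlow env-var mapping (hypothetical helper)."""
    problems: list[str] = []

    # Assumed default: elasticsearch when DOC_ENGINE is unset.
    engine = env.get("DOC_ENGINE", "elasticsearch")
    if engine not in ALLOWED_DOC_ENGINES:
        problems.append(f"DOC_ENGINE must be one of {sorted(ALLOWED_DOC_ENGINES)}, got {engine!r}")

    # Per the table, RAGFlow appends :9000 itself, so MINIO_HOST must be a bare hostname.
    if ":" in env.get("MINIO_HOST", ""):
        problems.append("MINIO_HOST should not include a port")

    if env.get("REGISTER_ENABLED", "1") not in {"0", "1"}:
        problems.append("REGISTER_ENABLED must be 0 or 1")

    # Railway has no GPUs, so anything other than cpu is a misconfiguration here.
    if env.get("DEVICE", "cpu") != "cpu":
        problems.append("DEVICE should be cpu on Railway")

    if not env.get("MEM_LIMIT", "8073741824").isdigit():
        problems.append("MEM_LIMIT must be a whole number of bytes")

    return problems


def describe_bytes(n: int) -> str:
    """Render a byte count in decimal gigabytes and binary gibibytes."""
    return f"{n / 1e9:.2f} GB ({n / 2**30:.2f} GiB)"


print(check_ragflow_env({"DOC_ENGINE": "elasticsearch", "MINIO_HOST": "minio:9000", "REGISTER_ENABLED": "0"}))
print(describe_bytes(8073741824))  # 8.07 GB (7.52 GiB)
```

Run against the first mapping, the check flags only the MINIO_HOST port. The hostname `minio` is itself a placeholder, not the template's actual internal hostname.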

Deployment Dependencies

- GitHub: infiniflow/ragflow

- Docker Hub: infiniflow/ragflow

- Documentation: ragflow.io/docs

- License: Apache 2.0

Hardware Requirements for Self-Hosting RAGFlow

| Resource | Minimum | Recommended |
|---|---|---|
| RAM (RAGFlow main) | 4 GB | 8 GB |
| RAM (Elasticsearch) | 2 GB | 4 GB |
| RAM (MySQL + Redis + MinIO) | 2 GB combined | 4 GB combined |
| Total RAM | 8 GB | 16 GB |
| CPU | 4 vCPUs | 8 vCPUs |
| Storage | 50 GB (MinIO + ES) | 200 GB+ |
| Runtime | Docker | Docker |
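The Total RAM row is the sum of the three per-service rows. The tiny sketch below reproduces that arithmetic, which is handy if you resize one service on Railway and want the new total. The dictionary keys are illustrative labels, and the figures are the table's own.

```python
# Per-service RAM in GB as (minimum, recommended), copied from the table above.
RAM_GB = {
    "RAGFlow main": (4, 8),
    "Elasticsearch": (2, 4),
    "MySQL + Redis + MinIO": (2, 4),
}


def total_ram_gb(profile: str) -> int:
    """Sum per-service RAM for the 'minimum' or 'recommended' profile."""
    column = {"minimum": 0, "recommended": 1}[profile]
    return sum(sizes[column] for sizes in RAM_GB.values())


for profile in ("minimum", "recommended"):
    print(f"{profile}: {total_ram_gb(profile)} GB")  # prints "minimum: 8 GB" then "recommended: 16 GB"
```

For example, raising Elasticsearch to 6 GB would bring the recommended total to 18 GB.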

Self-Hosting RAGFlow on Railway

Click Deploy on Railway, wait ~6 min for the RAGFlow image (~3.4 GB) to pull, then visit the generated public URL. The first user to sign up becomes the admin.

To self-host RAGFlow with Docker Compose locally for development:

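```bash
git clone https://github.com/infiniflow/ragflow.git
cd ragflow/docker
# Elasticsearch needs vm.max_map_count >= 262144 on Linux hosts
sudo sysctl -w vm.max_map_count=262144
docker compose -f docker-compose.yml up -d
```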

To call the RAGFlow OpenAI-compatible API, first create an API key in the UI. Then replace <chat_id> with the ID of your chat assistant and <YOUR_API_KEY> with that key:

```bash
curl "https://your-ragflow.up.railway.app/api/v1/chats_openai/<chat_id>/chat/completions" \
  -H "Authorization: Bearer <YOUR_API_KEY>" \
  -H "Content-Type: application/json" \
  -d '{"model":"model","messages":[{"role":"user","content":"Summarize the latest uploaded contract"}]}'
```
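The same request can be built from Python with only the standard library, which suits scripts that should not depend on an SDK. This is a sketch with several assumptions. `build_chat_request` is a hypothetical helper, and `YOUR_CHAT_ID` and `YOUR_API_KEY` are placeholders for values from your own instance. Setting `"stream": False` assumes the endpoint honors that OpenAI-style flag. The helper only constructs the request; nothing is sent until you call `urllib.request.urlopen`.

```python
import json
import urllib.request


def build_chat_request(base_url: str, chat_id: str, api_key: str, question: str) -> urllib.request.Request:
    """Build (but do not send) a POST to the OpenAI-compatible chat completions endpoint."""
    url = f"{base_url.rstrip('/')}/api/v1/chats_openai/{chat_id}/chat/completions"
    body = {
        "model": "model",  # same placeholder model name as the curl example
        "messages": [{"role": "user", "content": question}],
        "stream": False,   # request one JSON response instead of a streamed one
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request(
    "https://your-ragflow.up.railway.app/",
    "YOUR_CHAT_ID",  # placeholder: ID of a chat assistant in your RAGFlow UI
    "YOUR_API_KEY",  # placeholder: API key created in the RAGFlow UI
    "Summarize the latest uploaded contract",
)
print(req.full_url)

# Sending requires a live deployment; the response is assumed to follow the
# OpenAI chat-completions shape:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)["choices"][0]["message"]["content"])
```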

How Much Does RAGFlow Cost to Self-Host?

RAGFlow itself is fully open-source under the Apache 2.0 license — no license fees, no per-seat pricing, no feature gates. On Railway you pay only for the underlying compute (CPU, RAM, egress) and storage. A small team running a single tenant typically fits in $20–40/month of Railway resources, plus whatever LLM API spend you incur (OpenAI, Anthropic, or self-hosted Ollama). InfiniFlow does offer a managed RAGFlow Cloud tier if you prefer not to operate the stack yourself.

RAGFlow vs Dify vs Onyx

| Feature | RAGFlow | Dify | Onyx (Danswer) |
|---|---|---|---|
| Document parser quality | DeepDoc, best in class for PDFs/tables | Standard chunking | Connector-focused |
| Vector backend | Elasticsearch / Infinity / OpenSearch | Postgres + Weaviate | Vespa |
| Visual agent builder | Yes | Yes | No |
| Source citations | Paragraph-level | Document-level | Yes |
| License | Apache 2.0 | Apache 2.0 (with limits) | MIT |

Pick RAGFlow when document parsing fidelity matters most. Pick Dify if you need a broader low-code AI app builder. Pick Onyx if your priority is wiring up SaaS connectors (Slack, Confluence, Notion).

FAQ

What is RAGFlow and why self-host it?

RAGFlow is an open-source RAG engine that turns your private documents into a searchable, chat-able knowledge base with citations. Self-hosting on Railway keeps your documents inside your own infrastructure: no third-party SaaS sees your data, and you can plug in any LLM API or local model.

What does this Railway template deploy?

Five services: the RAGFlow app (web UI + API), a Railway-managed MySQL for metadata, Railway-managed Redis for task queues, MinIO for uploaded document storage, and Elasticsearch 8.11.3 for the vector and keyword index. Networking, volumes, and cross-service env vars are pre-wired.

Why does the template include Elasticsearch and MinIO?

Elasticsearch stores the chunk embeddings and the full-text index that RAGFlow's hybrid retrieval needs. MinIO is the S3-compatible object store where your raw uploaded files (PDFs, Word docs, images) live. Both are required for any non-trivial RAGFlow deployment.

How do I add an LLM API key in self-hosted RAGFlow?

After signing up, click your avatar → Model Providers → pick a provider (OpenAI, Anthropic, DeepSeek, Azure OpenAI, Ollama, OpenAI-compatible) and paste the key. Keys are stored per tenant in the MySQL database, never as environment variables.

Can I run RAGFlow on Railway without a GPU?

Yes. The v0.25.x image is "slim" and ships with no embedded models. Set DEVICE=cpu and use external embedding/LLM APIs (OpenAI's text-embedding-3-small, DeepSeek, or a remote Ollama endpoint). On-host CPU embedding works for small corpora but is slow.

How do I disable signups after onboarding my team in RAGFlow?

Set REGISTER_ENABLED=0 on the RAGFlow service in the Railway dashboard and redeploy. Existing accounts continue to work; the signup form returns an error.

Why does the first deploy take 6+ minutes?

The infiniflow/ragflow:v0.25.2 image is approximately 3.4 GB compressed. Railway's builder pulls it once and caches it — subsequent redeploys (env var changes, fixes) are much faster.

Template Content