Deploy MinerU | Document Parsing API

Self-host the MinerU PDF parser behind a FastAPI document-parsing API


Deploy and Host MinerU on Railway

MinerU is an open-source document parsing engine from Shanghai AI Lab's OpenDataLab that converts PDFs, images, and Office documents into LLM-ready Markdown and JSON. Self-host MinerU on Railway when you want a private, OCR-capable document parser for your RAG pipeline, knowledge base ingestion, or agentic workflow — no per-page cloud OCR fees and no third-party telemetry on your documents.

This Railway template deploys MinerU's mineru-api FastAPI server in pure CPU mode (pipeline backend) using a custom Python 3.12 Dockerfile, with a persistent volume for the Hugging Face model cache and a public HTTPS URL on port 8000. Pipeline models (~1–2 GB of layout, OCR, table, and formula recognizers) lazy-download on the first request and are cached on the volume for all subsequent boots.

Getting Started with MinerU on Railway

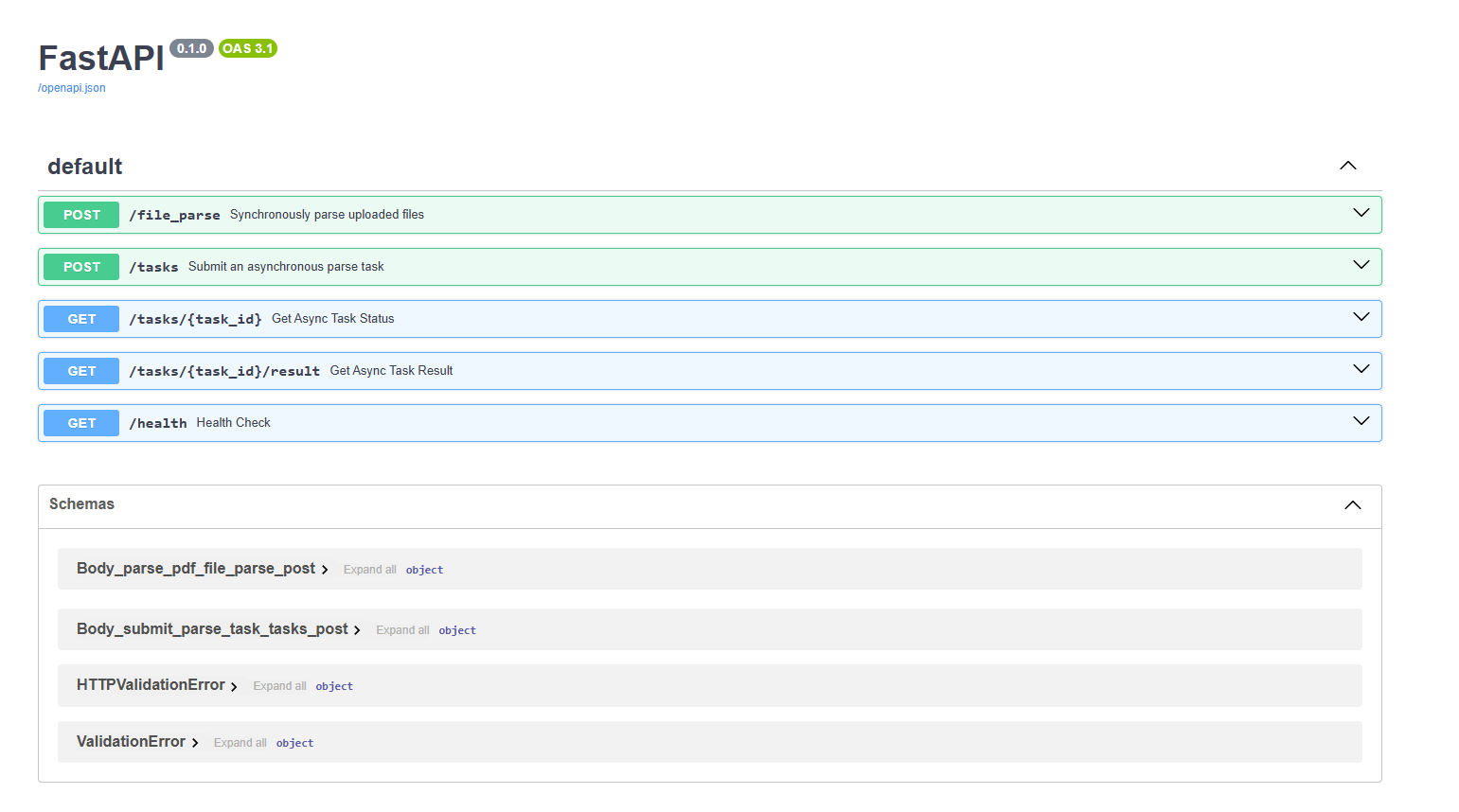

After deploy, the public URL exposes a FastAPI service. Visit https:///docs for the live Swagger UI to try the API in your browser. The first call to POST /file_parse triggers a one-time pipeline-model download (~1–2 GB) into the volume and may take 60–120 seconds; subsequent parses are fast (cached models, no re-download).
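The Swagger UI at /docs is generated from the OpenAPI schema that FastAPI publishes, by default at /openapi.json. To see which routes your installed mineru-api version exposes without clicking through the UI, the sketch below fetches that schema and lists every route with its HTTP method. It assumes the default schema URL has not been disabled or moved; `fetch_openapi` and `list_endpoints` are hypothetical helper names.

```python
import json
import urllib.request


def fetch_openapi(base_url: str, timeout: float = 30.0) -> dict:
    """Download the schema FastAPI serves at /openapi.json (its default location)."""
    url = f"{base_url.rstrip('/')}/openapi.json"
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def list_endpoints(spec: dict) -> list[str]:
    """Return 'METHOD /path' strings for every operation in an OpenAPI schema dict."""
    http_methods = {"get", "post", "put", "patch", "delete", "head", "options"}
    endpoints = []
    for path, operations in sorted(spec.get("paths", {}).items()):
        for method in sorted(operations):
            # Skip path-level keys such as "parameters" that are not operations
            if method in http_methods:
                endpoints.append(f"{method.upper()} {path}")
    return endpoints
```

Call `list_endpoints(fetch_openapi(base_url))` with your Railway URL as `base_url` (a placeholder here). The POST /file_parse entry and its form fields should appear alongside any task endpoints your version provides.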

Important: the API's default backend is hybrid-auto-engine, which requires a GPU. On this CPU-only deploy you must send backend=pipeline with every request — otherwise parsing fails. Example:

```shell
curl -X POST https:///file_parse \
  -F "files=@paper.pdf" \
  -F "backend=pipeline" \
  -F "lang_list=en" \
  -F "return_md=true"
```

The response includes the extracted Markdown, optional middle-JSON, content lists, and image base64 chunks depending on the return_* flags.
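A small helper can keep the backend=pipeline requirement from being forgotten and pull the Markdown out of the response. The sketch assumes the response layout used by the Python client example further down this page (a `results` mapping keyed by file name, each entry holding `md_content`). The helper names are hypothetical, and the exact set of `return_*` flags (for example `return_content_list` or `return_middle_json`) should be checked against the live /docs page.

```python
def build_parse_form(lang: str = "en", **return_flags: bool) -> dict[str, str]:
    """Form fields for POST /file_parse on the CPU-only deploy.

    backend is always "pipeline" because the server's default backend needs a GPU.
    Keyword arguments are return_* flags, e.g. return_content_list=True.
    """
    form = {"backend": "pipeline", "lang_list": lang, "return_md": "true"}
    for name, enabled in return_flags.items():
        if not name.startswith("return_"):
            raise ValueError(f"unexpected flag {name!r}; expected a return_* option")
        # Multipart form values are strings, so booleans are sent as "true"/"false"
        form[name] = "true" if enabled else "false"
    return form


def markdown_by_file(response_json: dict) -> dict[str, str]:
    """Map each parsed file's key to its Markdown, skipping entries without md_content."""
    results = response_json.get("results", {})
    return {name: entry["md_content"] for name, entry in results.items() if "md_content" in entry}
```

Pass the dict from `build_parse_form()` as the `data=` argument of `requests.post`, then feed `response.json()` to `markdown_by_file`.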

About Hosting MinerU

MinerU emerged in 2024 as the best open-source all-rounder for PDF-to-Markdown conversion, beating Marker, Unstructured, and Docling on extraction accuracy across academic papers, reports, and scanned documents. It uses a multi-stage pipeline: PaddlePaddle layout detection → orientation classification → SLANet+ table structure → UniMERNet formula recognition → reading-order assembly → Markdown emission.

Key features:

- OCR for 84+ languages including English, Chinese, Japanese, Korean, Arabic, Cyrillic, Devanagari, Thai

- Formula recognition rendered as LaTeX inline in the Markdown

- Table extraction preserved as HTML inside the Markdown

- Reading-order reconstruction for multi-column layouts and footnotes

- Multiple output formats: raw Markdown, structured JSON, image attachments, ZIP bundle

- Async task API with /tasks endpoints for long-running batch jobs

- Sliding-window memory optimization for long documents
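Because tables arrive as HTML and formulas as LaTeX inside the Markdown, downstream tools often need to pull them out separately, for example to index equations or render tables. A minimal sketch, assuming tables are `<table>…</table>` elements and formulas use `$$…$$` (display) and `$…$` (inline) delimiters; check a real response to confirm the delimiters your version emits. The helper names are hypothetical.

```python
import re

# Assumed delimiters: HTML <table> elements and $$...$$ / $...$ LaTeX math
TABLE_RE = re.compile(r"<table\b.*?</table>", re.DOTALL | re.IGNORECASE)
DISPLAY_MATH_RE = re.compile(r"\$\$(.+?)\$\$", re.DOTALL)
INLINE_MATH_RE = re.compile(r"(?<!\$)\$(?!\$)(.+?)(?<!\$)\$(?!\$)")


def extract_tables(markdown: str) -> list[str]:
    """Return each HTML table embedded in the Markdown, in document order."""
    return TABLE_RE.findall(markdown)


def extract_formulas(markdown: str) -> dict[str, list[str]]:
    """Return display and inline LaTeX bodies with surrounding whitespace stripped."""
    display = [m.strip() for m in DISPLAY_MATH_RE.findall(markdown)]
    # Remove display math first so its $$ delimiters are not re-read as inline math
    without_display = DISPLAY_MATH_RE.sub("", markdown)
    inline = [m.strip() for m in INLINE_MATH_RE.findall(without_display)]
    return {"display": display, "inline": inline}
```

Regular expressions are enough for a quick audit; for nested or malformed HTML tables an HTML parser is the safer choice.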

Why Deploy MinerU on Railway

A managed Railway deployment removes the rough edges of self-hosting:

- Public HTTPS URL with auto-generated TLS certificates

- Persistent volume keeps the Hugging Face model cache warm across redeploys

- One-click memory increase if you process larger PDFs

- No CUDA driver setup — the CPU pipeline backend works on Railway out of the box

- Variables, logs, metrics, and rollbacks in one dashboard

- Pay only for the container hours you use

Common Use Cases

- RAG ingestion — convert a PDF library into chunked Markdown for embedding into a vector store

- Knowledge base seeding — bulk-extract internal docs (PDFs, slides, spreadsheets) into a wiki

- Document QA — feed structured Markdown to an LLM agent that needs to cite specific sections

- Academic paper parsing — extract abstracts, equations (LaTeX), and figure captions for literature review tools
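For RAG ingestion and document QA, a common first step is to split the returned Markdown at headings so each chunk carries the section title it came from, which gives an agent something to cite. A minimal sketch of that approach, not a MinerU feature; `chunk_markdown` and its 2,000-character default are hypothetical choices to tune for your embedding model:

```python
import re

HEADING_RE = re.compile(r"^(#{1,6})\s+(.*\S)\s*$")


def chunk_markdown(markdown: str, max_chars: int = 2000) -> list[dict]:
    """Split Markdown into heading-scoped chunks of at most max_chars characters.

    Each chunk records the nearest preceding heading so answers can cite a section.
    """
    chunks: list[dict] = []
    heading = ""
    buffer: list[str] = []

    def flush() -> None:
        text = "\n".join(buffer).strip()
        # Hard-split oversized sections so every chunk fits the embedding budget
        for start in range(0, len(text), max_chars):
            chunks.append({"heading": heading, "text": text[start:start + max_chars]})
        buffer.clear()

    for line in markdown.splitlines():
        match = HEADING_RE.match(line)
        if match:
            flush()  # close the previous section before starting a new one
            heading = match.group(2)
        buffer.append(line)
    flush()
    return chunks
```

Lines beginning with # inside fenced code blocks would be mistaken for headings here; a production chunker would track fences or use a Markdown parser, and would usually split on sentence boundaries rather than raw character offsets.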

Dependencies for Self-Hosted MinerU

- MinerU API (mineru[core]>=3.0.0): FastAPI server with the parsing pipeline, built on python:3.12-slim with PaddlePaddle, PyTorch (CPU), pdfminer.six, and transformers.

Environment Variables Reference

| Variable | Purpose |
|---|---|
| PORT | HTTP port the FastAPI server binds to |
| HF_HOME | Hugging Face cache directory; point it at the volume to persist models |
| MINERU_MODEL_SOURCE | huggingface (lazy download) or local (pre-baked) |
| MINERU_DOWNLOAD_ON_BOOT | 1 pre-downloads pipeline models in the entrypoint; 0 skips |
| MINERU_TOOLS_CONFIG_JSON | Path to MinerU's auto-generated config file |
| HF_TOKEN | Optional; only needed if you switch to a gated HF model source |
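An entrypoint script or a preflight check can validate these variables before the server starts. The sketch below assumes defaults inferred from this template's description (port 8000, HF_HOME=/data/huggingface, lazy download, no boot-time download) rather than taken from MinerU itself, and restricts MINERU_MODEL_SOURCE to the two values the table lists even though MinerU may accept others. `MinerUSettings` and `load_settings` are hypothetical names.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class MinerUSettings:
    port: int
    hf_home: str
    model_source: str
    download_on_boot: bool
    hf_token_set: bool


def load_settings(env: Mapping[str, str] = os.environ) -> MinerUSettings:
    """Read the template's environment variables, applying assumed defaults."""
    source = env.get("MINERU_MODEL_SOURCE", "huggingface")
    if source not in {"huggingface", "local"}:
        raise ValueError(f"MINERU_MODEL_SOURCE must be 'huggingface' or 'local', got {source!r}")
    flag = env.get("MINERU_DOWNLOAD_ON_BOOT", "0")
    if flag not in {"0", "1"}:
        raise ValueError(f"MINERU_DOWNLOAD_ON_BOOT must be '0' or '1', got {flag!r}")
    return MinerUSettings(
        port=int(env.get("PORT", "8000")),
        hf_home=env.get("HF_HOME", "/data/huggingface"),
        model_source=source,
        download_on_boot=flag == "1",
        # Only record whether a token exists; never echo the secret itself
        hf_token_set=bool(env.get("HF_TOKEN")),
    )
```

Passing a plain dict instead of os.environ makes the function easy to test without touching the real environment.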

Deployment Dependencies

- Runtime: Docker (Railway), Python 3.12

- Source: github.com/opendatalab/MinerU

- Template repo: github.com/praveen-ks-2001/mineru-railway

- Docs: opendatalab.github.io/MinerU

Hardware Requirements for Self-Hosting MinerU

The pipeline (CPU) backend works on modest hardware for small documents. Larger or batch workloads benefit from more RAM.

| Resource | Minimum | Recommended |
|---|---|---|
| CPU | 2 vCPU | 8 vCPU |
| RAM | 4 GB (small PDFs only) | 16 GB |
| Storage | 5 GB volume (pipeline models) | 20 GB volume (multi-tenant) |
| Runtime | Docker | Docker |

For production batch parsing or formula-heavy academic papers, GPU acceleration on a different host is roughly 15× faster. This Railway deploy is best for low-volume, on-demand parsing.
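Since a single /file_parse call returns only when parsing finishes, client timeouts need headroom that grows with page count. At the ~3.3 CPU seconds per page quoted in the comparison table at the end of this page, a 200-page report needs about 200 × 3.3 ≈ 660 s of parsing alone, already above a 600 s client timeout. A rough estimator, assuming parse time grows linearly with pages; the 2× safety factor and the `suggested_timeout` name are hypothetical:

```python
import math


def suggested_timeout(pages: int, sec_per_page: float = 3.3,
                      first_request: bool = False, safety_factor: float = 2.0) -> int:
    """Rough client timeout in seconds for one synchronous /file_parse call.

    sec_per_page defaults to the ~3.3 s/page CPU figure quoted on this page;
    first_request adds 120 s for the one-time model download.
    """
    if pages < 1:
        raise ValueError("pages must be at least 1")
    estimate = pages * sec_per_page
    if first_request:
        estimate += 120  # upper end of the 60-120 s first-call download window
    return math.ceil(estimate * safety_factor)
```

Real throughput depends on vCPU count, scan quality, and how many tables and formulas a page holds, so measure a few representative documents on your deploy and adjust `sec_per_page`.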

Self-Hosting MinerU with Docker

The official OpenDataLab image is GPU-only. For CPU-only deployments (Railway, local laptops without CUDA), build a slim image:

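```dockerfile
FROM python:3.12-slim

# OpenCV/OpenMP runtime libraries, Noto fonts (including CJK), tini, and curl
RUN apt-get update && apt-get install -y --no-install-recommends \
        fonts-noto-core fonts-noto-cjk libgl1 libglib2.0-0 libgomp1 tini curl \
    && rm -rf /var/lib/apt/lists/*

RUN pip install --no-cache-dir "mineru[core]>=3.0.0"

# Keep the Hugging Face model cache on the persistent volume mounted at /data
ENV HF_HOME=/data/huggingface
# Download models from Hugging Face lazily, on first use
ENV MINERU_MODEL_SOURCE=huggingface

EXPOSE 8000

# tini runs as PID 1 so signals reach the server and zombie processes are reaped
ENTRYPOINT ["/usr/bin/tini", "--"]
CMD ["mineru-api", "--host", "0.0.0.0", "--port", "8000"]
```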

Then send a parse request. The following is a Python client example using requests:

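```python
import requests

# Upload one PDF to the CPU pipeline backend and request Markdown output
with open("paper.pdf", "rb") as f:
    response = requests.post(
        "https:///file_parse",
        files={"files": f},
        data={"backend": "pipeline", "lang_list": "en", "return_md": "true"},
        timeout=600,  # the first request also downloads models, so allow a long timeout
    )

response.raise_for_status()  # surface HTTP errors instead of failing on missing keys
result = response.json()

# Results are keyed by the uploaded file's name without its extension
print(result["results"]["paper"]["md_content"])
```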

Is MinerU Free to Self-Host?

MinerU is fully open source under the AGPL-3.0 license — no per-page fees, no API key billing, no usage caps. On Railway you only pay for container compute and the volume's storage. A small CPU deploy with 8 GB RAM and a 10 GB volume costs roughly the same as any other small Railway service; you avoid commercial OCR APIs (AWS Textract, Google Document AI, Azure Form Recognizer) entirely.

FAQ

What is MinerU and why self-host it?

MinerU is an open-source PDF and document parsing engine that converts PDFs, images, DOCX, PPTX, and XLSX files into LLM-ready Markdown or JSON with OCR, layout detection, table extraction, and LaTeX formula recognition. Self-hosting on Railway gives you private parsing for sensitive documents and avoids per-page commercial OCR billing.

What does this Railway template deploy?

A single service running a custom Python 3.12 Docker image with mineru[core] installed, the mineru-api FastAPI server bound to port 8000, a persistent volume mounted at /data for the Hugging Face model cache, and a public HTTPS URL.

Why is this CPU-only and not GPU?

Railway containers do not have GPU access. The official opendatalab/mineru Docker image is built on vllm/vllm-openai and requires CUDA; this template uses a slim Python base image with mineru[core] for the CPU pipeline backend. Throughput is much lower than GPU inference but fine for low-volume on-demand parsing.

Why must I send backend=pipeline on every request?

The mineru-api server's default backend is hybrid-auto-engine, which requires a GPU. The backend is selected per request via a form parameter, not at server startup, so always send backend=pipeline as a form field to use the CPU code path.

How long does the first request take?

The first POST /file_parse call lazy-downloads pipeline models (~1–2 GB) from Hugging Face into the volume. Plan for 60–120 seconds on the first request. Subsequent requests reuse the cached models, and so do redeploys, because the models live on the volume.

Does this template support GPU acceleration if I move to a Pro plan?

Railway does not offer GPUs at any tier as of this writing. To use MinerU's GPU backends (hybrid-auto-engine, vlm-auto-engine), run it on a CUDA-enabled host elsewhere (RunPod, Lambda, your own GPU box) and keep this Railway service for the pure-CPU pipeline path.

Can I parse non-English documents?

Yes — pass the lang_list form parameter with one of the documented language groups (ch, en, korean, japan, arabic, cyrillic, devanagari, etc.). Pipeline backend OCR supports 84+ languages.
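To parse documents in different languages in one call, the form can carry several files and one lang_list value per file. The sketch below builds requests-style `files` and `data` lists; it assumes mineru-api pairs the i-th lang_list value with the i-th uploaded file and that every upload is a PDF. Confirm both against the /docs page for your version. `multi_file_request` is a hypothetical helper.

```python
from typing import BinaryIO


def multi_file_request(docs: list[tuple[str, BinaryIO, str]]) -> tuple[list, list]:
    """Build requests-style files/data lists for several documents in one call.

    docs holds (filename, open binary handle, lang) triples. lang_list is repeated
    once per file in upload order (an assumed positional pairing).
    """
    files = [("files", (name, handle, "application/pdf")) for name, handle, _ in docs]
    data = [("backend", "pipeline"), ("return_md", "true")]
    data += [("lang_list", lang) for _, _, lang in docs]
    return files, data
```

With both PDFs opened in a `with` block, pass the result as `requests.post(url, files=files, data=data, timeout=...)`; requests accepts lists of tuples for both arguments, which is what allows a field name to repeat.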

MinerU vs Marker vs Unstructured

| Feature | MinerU | Marker | Unstructured |
|---|---|---|---|
| Markdown quality | Best (academic + reports) | Good (general purpose) | Good (simple docs) |
| OCR languages | 84+ | 90+ (via Surya) | Multilingual |
| Formulas as LaTeX | Yes | Yes | No |
| Tables as HTML | Yes | Yes (LLM-optimized) | Limited |
| CPU sec/page | ~3.3 | ~16 | ~4.2 |
| License | AGPL-3.0 | GPL (commercial restricted) | Apache 2.0 |

MinerU wins on extraction accuracy and formula handling for academic content. Marker is competitive on general docs but slower without GPU. Unstructured is the easiest to integrate but produces less structured output for complex layouts.
