Deploy OpenHands | Open Source Devin Alternative

Self-host the OpenHands AI software engineer agent on Railway


Deploy and Host OpenHands on Railway

OpenHands (formerly OpenDevin) is an open-source AI software engineering agent that writes code, runs commands, browses the web, and ships pull requests on your behalf — like a team member you can task in plain English. Self-host OpenHands on Railway when you want a private, single-tenant agent that uses your own LLM API keys instead of a hosted SaaS.

This Railway template deploys OpenHands as a single service with RUNTIME=local (no Docker socket needed), an nginx HTTP basic-auth front, and a persistent volume for sessions and settings. Pick your LLM provider (Anthropic, OpenAI, OpenRouter, Bedrock, or any litellm-compatible endpoint) once, and the agent is ready to work.

Getting Started with OpenHands on Railway

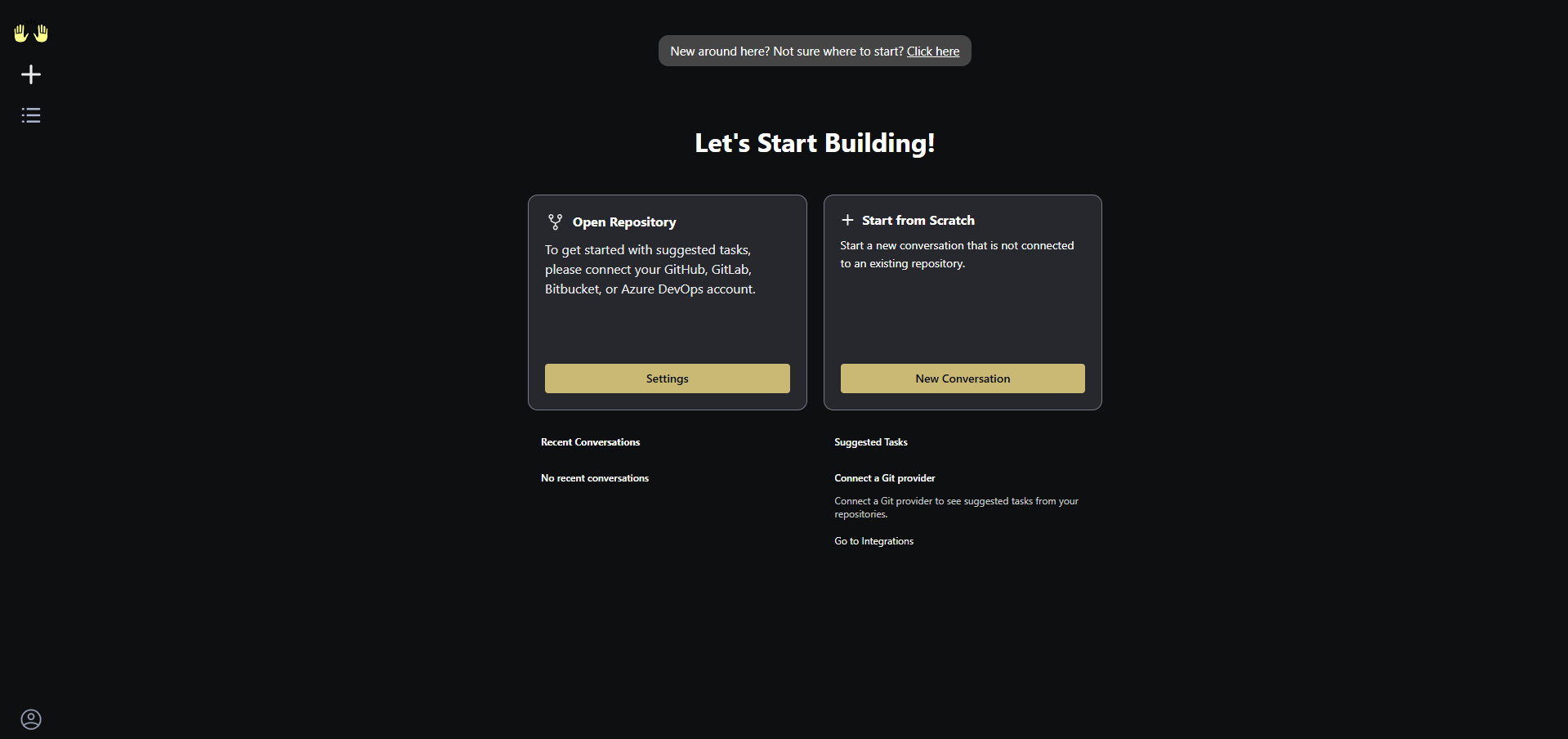

Once the deploy is healthy, open the public Railway URL and log in with the basic-auth credentials you set (BASIC_AUTH_USER / BASIC_AUTH_PASSWORD). On first load, click the gear icon and configure your LLM — paste an API key, pick a model (Claude Sonnet 4.6 and GPT-5 are good defaults), and save. From there you can start a new conversation, attach a GitHub repository, and prompt the agent with anything from "fix this failing test" to "scaffold a new FastAPI endpoint with auth and tests". All conversations and uploaded files persist on the mounted Railway volume across redeploys.
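To check the login step above from a terminal, it can help to see what the browser actually sends. This is a minimal sketch, assuming the nginx gate uses standard HTTP basic auth; the helper name `basic_auth_header` and the example credentials are illustrative and not part of the template.

```shell
# Build the Authorization header that HTTP basic auth expects:
# "Basic " followed by base64("user:password").
basic_auth_header() {
  # $1 = username, $2 = password (hypothetical helper, not from the template)
  printf 'Authorization: Basic %s\n' \
    "$(printf '%s:%s' "$1" "$2" | base64 | tr -d '\n')"
}

# Example credentials only.
basic_auth_header "user" "pass"
```

`curl -u "$BASIC_AUTH_USER:$BASIC_AUTH_PASSWORD"` against your Railway URL sends the same header. A request without it should be answered with a 401 by nginx.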

About Hosting OpenHands

OpenHands is a permissively licensed (MIT) AI agent framework backed by All Hands AI and a research community at the University of Illinois Urbana-Champaign. It scores at the top of the SWE-bench Verified leaderboard and is used by engineering teams that want a code-native agent they can audit and run on their own infrastructure.

Key features:

- Plug in any LLM (Anthropic, OpenAI, Google, AWS Bedrock, OpenRouter, local Ollama, etc.) via litellm

- Built-in tools: shell, file editor, web fetch, git, GitHub PR creation

- Multi-conversation workspace with persistent chat history

- Browse-and-attach GitHub repos directly from the UI

- Headless / API mode for programmatic agent runs

This template runs the agent in local-runtime mode: the agent process executes inside the Railway container itself rather than spinning up a per-conversation Docker sandbox (Railway does not expose a Docker socket). The container boots fast, but the agent's shell commands run in it directly with no per-conversation isolation, so this setup suits trusted single-user or small-team use.

Why Deploy OpenHands on Railway

Railway gives you a one-click home for OpenHands without the host Docker socket that the upstream docker run recipe assumes:

- One service, one volume, no Compose file to maintain

- nginx basic-auth wrapper baked in — no public, unauthenticated agent

- Persistent storage for conversations and settings

- HTTPS with auto-renewing certs at a real domain

- Pay only for what the agent actually consumes

Common Use Cases

- Pair-programming on a private repo — point OpenHands at your GitHub, give it a task, review the PR

- Automated bug triage — drop in a stack trace, let the agent reproduce, locate, and propose a fix

- One-off scripting — "scrape this site and turn it into a CSV" without spinning up a local dev environment

- On-call helper — read logs, query an API, summarize an incident, all from the OpenHands UI

Dependencies for OpenHands on Railway

- Base image: docker.openhands.dev/openhands/openhands:1.7.0 (extended with nginx + tmux)

- Strategy D source: https://github.com/praveen-ks-2001/openhands-railway

Environment Variables Reference

| Variable | Description |
|---|---|
| BASIC_AUTH_USER | Username for the nginx basic-auth gate |
| BASIC_AUTH_PASSWORD | Password for the nginx basic-auth gate |
| RUNTIME | Agent runtime mode; must be local on Railway |
| LLM_API_KEY | Optional: pre-fill an LLM API key (otherwise set in UI) |
| LLM_MODEL | Optional: pre-fill a default model name |
| FILE_STORE_PATH | Volume path where sessions and settings live |
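As a sketch, a filled-in variable set might look like the following. All values are placeholders. The model name is the example given in the template's own variable hints, and `/.openhands` is the volume mount path this page mentions; that `FILE_STORE_PATH` should equal the mount path is an assumption, not something the page states.

```shell
# Example values only; replace the credentials and key with your own.
BASIC_AUTH_USER="admin"              # placeholder username
BASIC_AUTH_PASSWORD="change-me"      # placeholder; use a long random value
RUNTIME="local"                      # required on Railway (no Docker socket)
LLM_MODEL="claude-sonnet-4-6"        # optional; example name from the template hints
LLM_API_KEY="your-provider-api-key"  # optional; can be set in the UI instead
FILE_STORE_PATH="/.openhands"        # assumed to match the volume mount path
```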

Deployment Dependencies

- Runtime: Python 3.13 + Node frontend bundled in the official image

- GitHub: https://github.com/All-Hands-AI/OpenHands

- Docker registry: docker.openhands.dev/openhands/openhands

- Self-hosting docs: https://docs.all-hands.dev/usage/installation

Hardware Requirements for Self-Hosting OpenHands

| Resource | Minimum | Recommended |
|---|---|---|
| CPU | 1 vCPU | 2 vCPU |
| RAM | 2 GB | 4 GB |
| Storage | 5 GB volume | 10 GB volume |
| Runtime | Python 3.13 (bundled) | Python 3.13 (bundled) |

The container itself is light; most resource cost comes from outbound LLM API calls, which are billed by the model provider, not Railway.

Self-Hosting OpenHands on Railway

The official upstream docker run recipe assumes a Docker socket on the host so OpenHands can spawn per-conversation sandbox containers. Railway does not expose /var/run/docker.sock, so the agent runs in RUNTIME=local mode inside the container. The Strategy D image extends the official one to bake in tmux (required by the local runtime) and add an nginx basic-auth front so the public URL cannot be used without credentials.

The minimal local-equivalent (using the same image as this template):

```shell
docker run -p 3000:3000 \
  -v openhands-data:/.openhands \
  -e RUNTIME=local \
  -e SANDBOX_USER_ID=0 \
  -e SERVE_FRONTEND=true \
  docker.openhands.dev/openhands/openhands:1.7.0
```

To extend with nginx auth like this template does:

```dockerfile
FROM docker.openhands.dev/openhands/openhands:1.7.0

USER root

RUN apt-get update && apt-get install -y --no-install-recommends \
    tmux nginx apache2-utils curl \
    && rm -rf /var/lib/apt/lists/*

COPY nginx.conf /etc/nginx/conf.d/openhands.conf
COPY start.sh /usr/local/bin/start.sh
RUN chmod +x /usr/local/bin/start.sh

ENTRYPOINT []
CMD ["/usr/local/bin/start.sh"]
```
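The Dockerfile copies an `nginx.conf` that is not shown on this page. Below is a rough sketch of what such a config could contain, written as a shell function so the listen port can be substituted at start-up. The function name, the `/etc/nginx/.htpasswd` path, the `PORT` fallback of 8080, and the assumption that OpenHands listens on 127.0.0.1:3000 (the port used in the docker run example) are illustrative and not taken from the template repo.

```shell
# Print an nginx server block that puts basic auth in front of OpenHands.
# $1 = public port nginx listens on, $2 = internal OpenHands port.
render_nginx_conf() {
  cat <<EOF
server {
    listen $1;

    # Reject any request without valid credentials from the htpasswd file.
    auth_basic "OpenHands";
    auth_basic_user_file /etc/nginx/.htpasswd;

    location / {
        proxy_pass http://127.0.0.1:$2;
        # Pass WebSocket upgrades through to the app, in case the UI uses them.
        proxy_http_version 1.1;
        proxy_set_header Upgrade \$http_upgrade;
        proxy_set_header Connection "upgrade";
        proxy_set_header Host \$host;
    }
}
EOF
}

# Assumes the platform provides the public port in PORT; falls back to 8080.
render_nginx_conf "${PORT:-8080}" 3000
```

A start script could redirect this output to /etc/nginx/conf.d/openhands.conf and create the htpasswd file with `htpasswd` from apache2-utils, which the Dockerfile installs.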

How Much Does OpenHands Cost to Self-Host?

OpenHands itself is free and MIT-licensed — no seat fees, no usage tiers. On Railway you pay only the underlying compute and storage (typically a few dollars a month for personal use). The bulk of real cost comes from the LLM provider you wire up: a heavy Claude Sonnet day might be $1–$5 of API spend. Compare that with hosted Devin-class SaaS at $20–$500 per seat per month and the savings are obvious for individual developers and small teams.
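To make the arithmetic concrete, here is a small sketch of a monthly estimate. The $1 to $5 per heavy day range comes from the paragraph above; the 10 heavy days and the $5 Railway figure are assumptions chosen only for illustration, and `estimate_monthly_cost` is a hypothetical helper.

```shell
# Rough monthly total in whole dollars:
# (heavy-use days x LLM spend per day) + Railway compute and storage.
estimate_monthly_cost() {
  # $1 = heavy-use days per month, $2 = LLM dollars per day, $3 = Railway dollars per month
  echo $(( $1 * $2 + $3 ))
}

# Assumed inputs: 10 heavy days, $1 to $5 per day, $5 for Railway.
echo "low:  \$$(estimate_monthly_cost 10 1 5)"
echo "high: \$$(estimate_monthly_cost 10 5 5)"
```

With those assumed inputs the estimate lands between $15 and $55 a month. The LLM part scales linearly with how many heavy days you actually have.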

FAQ

What is OpenHands and why self-host it?

OpenHands is an open-source AI software engineering agent, the closest open analogue to Cognition's Devin or Cursor's background agent. Self-hosting on Railway keeps your code, prompts, and LLM API keys inside infrastructure you control instead of a vendor's SaaS.

What does this Railway template deploy?

A single Railway service running the official docker.openhands.dev/openhands/openhands:1.7.0 image, extended with tmux (needed by RUNTIME=local) and an nginx basic-auth wrapper. A 5 GB volume mounts at /.openhands for chat history and settings.

Why is HTTP basic auth required?

The container exposes a shell-execution agent at a public Railway URL. Without auth, any visitor could burn through your LLM API key or run arbitrary commands. The template's start.sh refuses to launch when BASIC_AUTH_USER / BASIC_AUTH_PASSWORD are unset — fail-closed, not fail-open.
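The template's actual start.sh is not reproduced here. A fail-closed check of this kind can be sketched as follows, assuming a POSIX shell; the function name `require_basic_auth` and the error message are hypothetical.

```shell
# Return non-zero unless both basic-auth variables are set and non-empty.
require_basic_auth() {
  if [ -z "${BASIC_AUTH_USER:-}" ] || [ -z "${BASIC_AUTH_PASSWORD:-}" ]; then
    echo "BASIC_AUTH_USER and BASIC_AUTH_PASSWORD must be set; refusing to start." >&2
    return 1
  fi
}

# Demo in a subshell with the username missing: the check fails and nothing
# after it would run. A real start script would call
#   require_basic_auth || exit 1
# before launching nginx or the agent.
( unset BASIC_AUTH_USER; require_basic_auth ) || echo "startup aborted"
```

Running the check first means a missing variable stops the container before any port is opened, rather than leaving the agent reachable without a password.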

Which LLM providers can I use with self-hosted OpenHands on Railway?

Anything litellm supports — Anthropic Claude, OpenAI, Google Gemini, AWS Bedrock, Azure OpenAI, OpenRouter, Mistral, Ollama, vLLM, and local LM Studio endpoints. Configure via the UI or pre-fill LLM_API_KEY and LLM_MODEL env vars.

Can OpenHands open pull requests on my GitHub repos?

Yes. Connect a GitHub personal access token in the UI settings; the agent can clone, branch, commit, and push PRs as part of normal task execution.

How do I upgrade OpenHands to a newer version?

Bump the image tag on the FROM docker.openhands.dev/openhands/openhands: line in the Strategy D repo's Dockerfile, push to GitHub, and Railway redeploys automatically. The volume preserves your conversation history across upgrades.
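The tag change can also be scripted. This is a minimal sketch, assuming the Dockerfile's FROM line has the form shown earlier on this page; `bump_base_image_tag` is a hypothetical helper and 1.8.0 is a made-up tag, so check the registry for real ones.

```shell
# Rewrite the base-image tag on the FROM line.
# Reads a Dockerfile on stdin, writes the updated Dockerfile to stdout.
bump_base_image_tag() {
  # $1 = new tag; allow only characters that are safe inside the sed replacement.
  case "$1" in
    ""|*[!A-Za-z0-9._-]*) echo "invalid tag: $1" >&2; return 1 ;;
  esac
  sed -E "s|^(FROM docker\.openhands\.dev/openhands/openhands:).*\$|\1$1|"
}

# Demo on a single line; the tag 1.8.0 is hypothetical.
printf 'FROM docker.openhands.dev/openhands/openhands:1.7.0\n' | bump_base_image_tag 1.8.0
```

Against a real checkout, something like `bump_base_image_tag 1.8.0 < Dockerfile > Dockerfile.new && mv Dockerfile.new Dockerfile` followed by a commit and push would trigger the redeploy.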

Template Content

OpenHands (praveen-ks-2001/openhands-railway)

| Variable | Description |
|---|---|
| LLM_MODEL | Optional: pre-fill default model name (e.g. claude-sonnet-4-6) |
| LLM_API_KEY | Optional: pre-fill LLM provider API key |
| LLM_BASE_URL | Optional: pre-fill custom LLM endpoint URL |